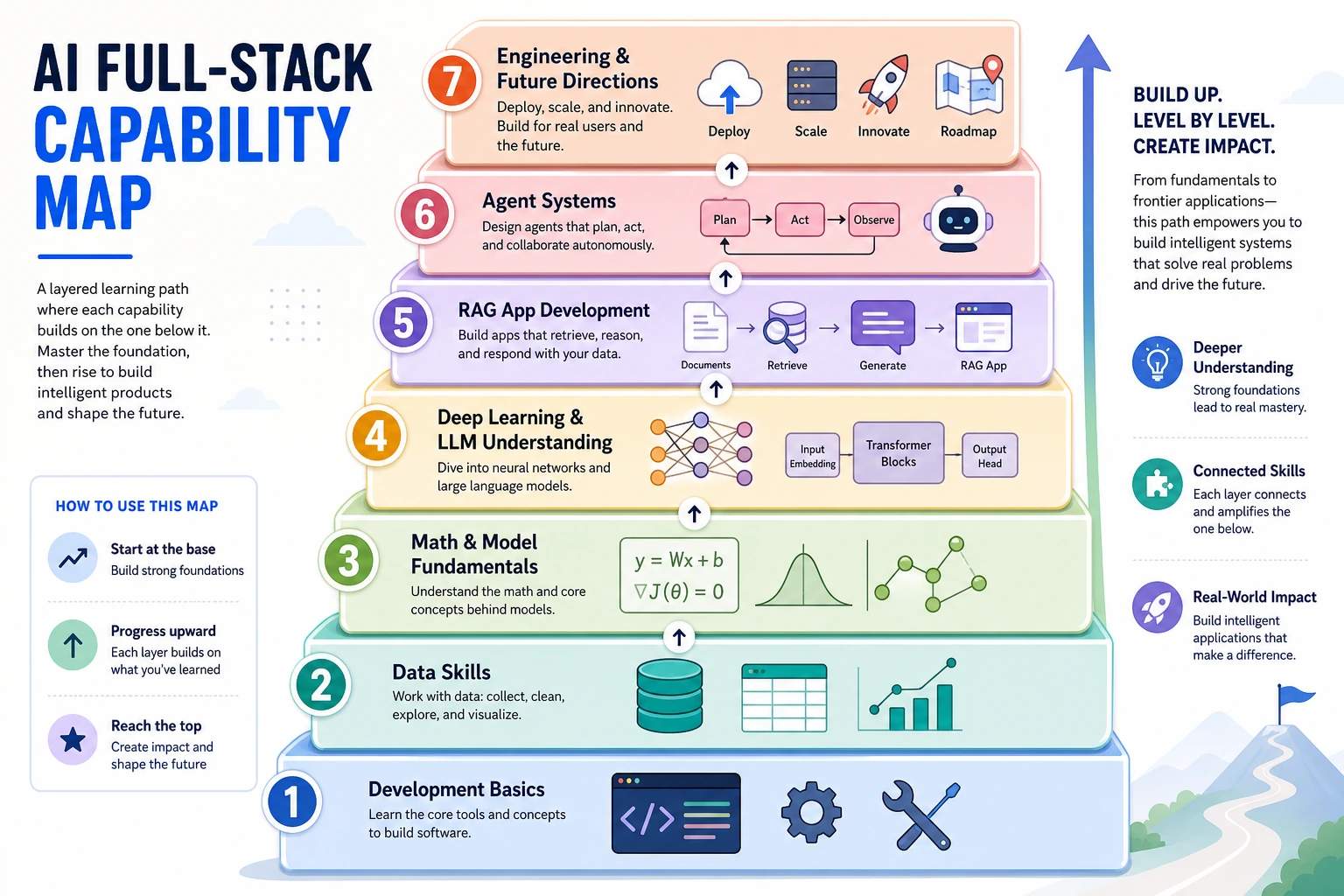

0.3 AI Full-Stack Capability Map

Read the picture first. The course is one path:

tools -> Python -> data -> models -> LLM -> RAG -> Agent -> specialization/delivery

You do not need every detail now. Just remember:

| If you are blocked by... | Go back to... |
|---|---|
| running code | tools and Python |
| messy inputs | data |
| unreliable answers | evaluation and RAG |
| uncontrolled actions | Agent traces and permissions |

The Seven Layers

| Layer | Course chapters | First visible evidence | Deeper question |
|---|---|---|---|
| Tools | 1 | A reproducible project folder and Git history | Can another person rerun it? |
| Python | 2 | Small scripts with clear inputs and outputs | Is the code readable, typed, and testable? |
| Data | 3 | Clean tables, charts, and notes | Do you know where the data is wrong or biased? |
| Models | 4-6 | Trained or inspected model experiments | What metric would change your decision? |
| LLM | 7 | Prompt, tokens, embeddings, Transformer intuition | Which behavior comes from data, decoding, or context? |
| RAG | 8 | Retrieval trace and answer evaluation | Did the answer use the right evidence? |
| Agent | 9 | Tool traces, permissions, memory notes, deployment notes | What can fail when users, files, and actions are real? |
| Specialization / delivery | 10-12 and electives | Vision/NLP/multimodal demos, exported assets, deployment notes | Which domain constraints change the product decision? |

The course is not a pile of topics. It is a debugging stack. When an AI application behaves badly, the cause may live several layers below the feature you are looking at.
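One way to picture the debugging stack is a bottom-up check: test each layer in course order and stop at the lowest one that fails. The sketch below is only an illustration. The function name `first_failing_layer` and the lambda checks are hypothetical stand-ins for whatever real checks your project has (a rerun script, a data validation step, a retrieval trace review).

```python
from typing import Callable, Dict, Optional

# Layer order follows the course path, lowest layer first.
LAYERS = ["tools", "python", "data", "models", "llm", "rag", "agent"]


def first_failing_layer(checks: Dict[str, Callable[[], bool]]) -> Optional[str]:
    """Return the lowest layer whose check fails, or None if every check passes.

    Layers without a registered check are skipped.
    """
    for layer in LAYERS:
        check = checks.get(layer)
        if check is not None and not check():
            return layer
    return None


# Hypothetical checks for a PDF question-answering app.
# The user sees a wrong answer (a RAG symptom), but the data check fails too.
checks = {
    "tools": lambda: True,   # the project reruns from the README commands
    "python": lambda: True,  # scripts run with clear inputs and outputs
    "data": lambda: False,   # extracted PDF text is missing tables
    "rag": lambda: False,    # answers cite the wrong passages
}

print(first_failing_layer(checks))  # data
```

The visible symptom sits in the RAG layer, but the first failure is in data, so that is where to go back first.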

Main Line And Expansion Tracks

Use Chapters 1-9 as the default main line. After Chapter 9, you should be able to build a small LLM/RAG/Agent project with evidence, logs, and a safety boundary.

Then choose Chapters 10-12 by product need:

| Need | Choose | Why |
|---|---|---|
| Images, cameras, OCR, detection, segmentation | Chapter 10 Computer Vision | The output is visual: labels, boxes, masks, text, or video events |
| Text labels, extraction, summaries, linguistic evaluation | Chapter 11 NLP | The output is a text task with labels, fields, spans, or generated text |
| Images, PDFs, audio, video, creative assets, multimodal RAG | Chapter 12 Multimodal/AIGC | The workflow mixes modalities and needs source, prompt, review, and export records |
| Deployment, advanced Python, classic ML depth | Electives | The main project needs a specific engineering or algorithmic side skill |

How To Use The Map

Before starting a project, mark the highest-risk layer. For example, a PDF question-answering app usually fails first in data cleaning and retrieval, not in the chat UI. An automation agent usually fails first in tool permissions, state, and evaluation, not in the prompt wording.

During each chapter, keep one artifact that proves the layer works. Screenshots are useful, but logs, README commands, small datasets, metric tables, and failure notes are stronger because they help you debug later.
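A lightweight way to keep such artifacts is an append-only evidence log, one JSON line per check. This is a minimal sketch, not a course requirement. The function name `record_evidence`, the field names, and the log file location are all assumptions chosen for illustration.

```python
import json
from datetime import datetime, timezone
from pathlib import Path


def record_evidence(log_path, layer, command, result, failure_note=""):
    """Append one evidence entry as a JSON line and return the entry.

    The field names are illustrative; keep whatever helps you debug later.
    """
    entry = {
        "time": datetime.now(timezone.utc).isoformat(),
        "layer": layer,                # e.g. "data" or "rag"
        "command": command,            # the command someone else can rerun
        "result": result,              # a metric, a pass/fail, or a short summary
        "failure_note": failure_note,  # what went wrong, if anything
    }
    path = Path(log_path)
    path.parent.mkdir(parents=True, exist_ok=True)
    with path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(entry) + "\n")
    return entry
```

For example, calling `record_evidence("evidence/log.jsonl", "data", "python clean.py", "pass")` after a data cleaning run appends one line to the log. Because each line is a separate JSON object, the file stays easy to search and diff in Git.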

Optional background: if you want the history behind these layers, skim the 15-stage AI development map.

Next, choose a learning path.