4 AI Math: the Minimum Necessary Foundation

Chapter 4 has one job: make the math inside models feel like tools you can run and explain, not a wall of formulas.

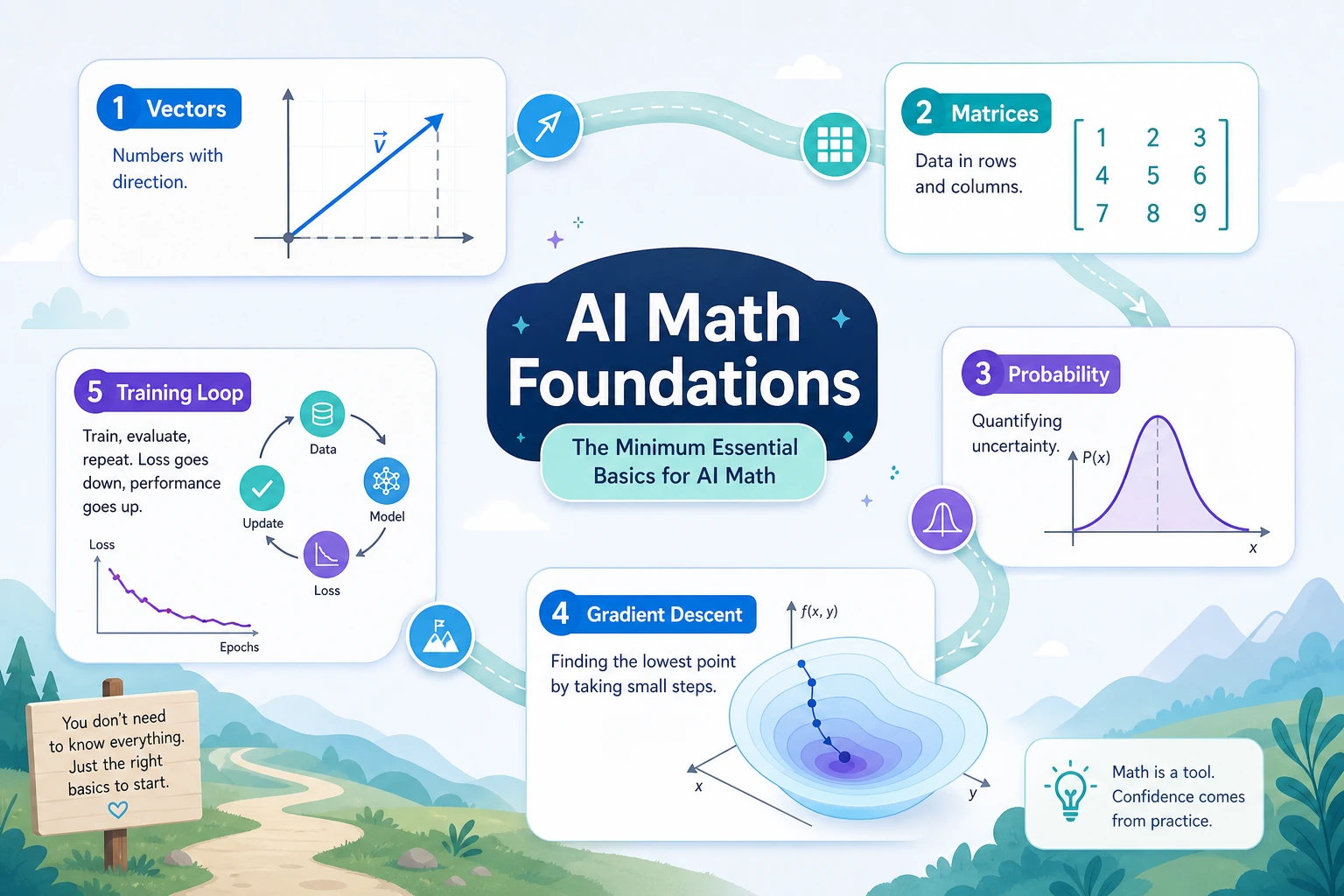

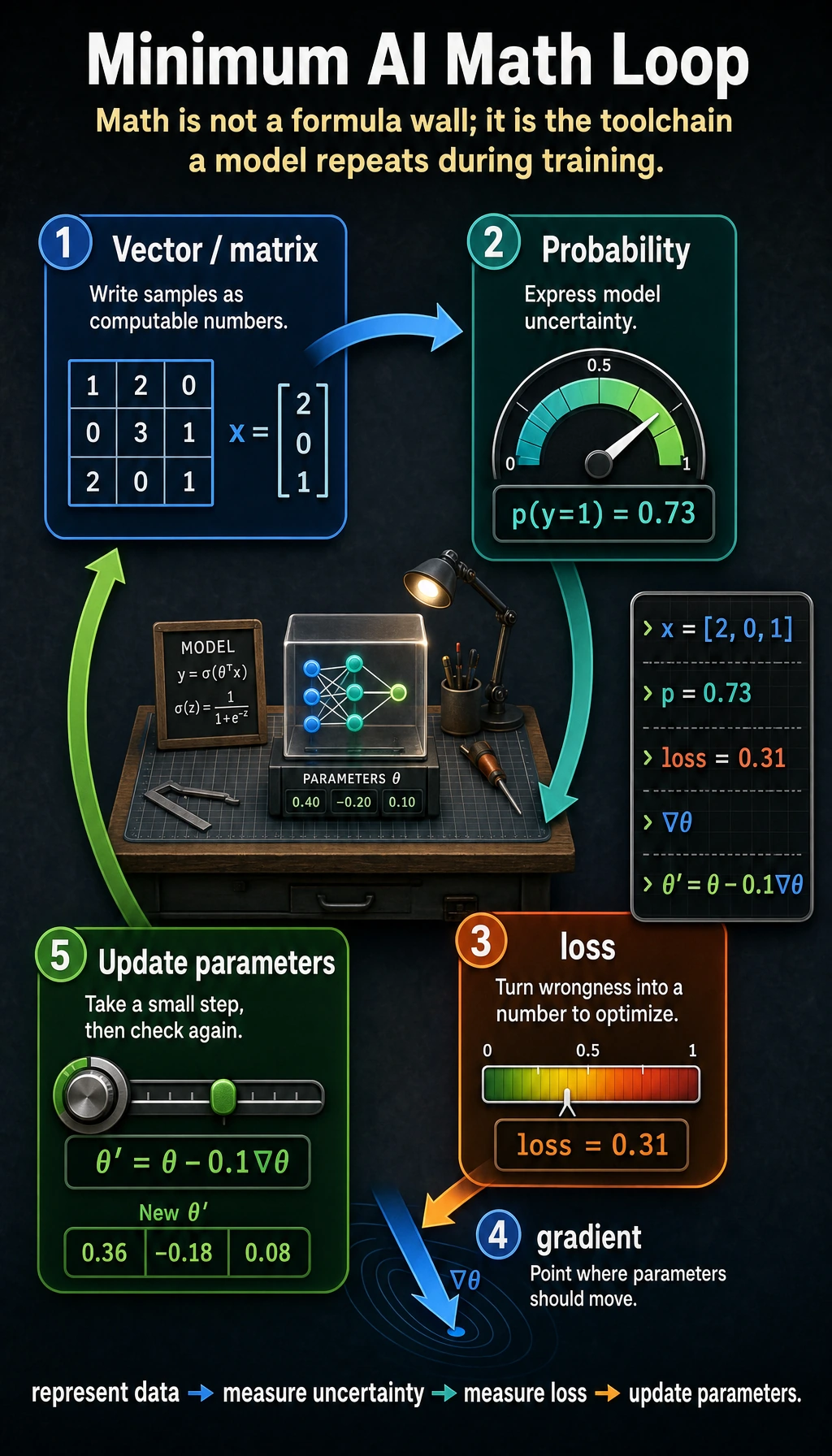

See The Model Math Loop

Read the picture first. Most AI math in this course supports one loop:

represent data -> measure uncertainty -> measure loss -> update parameters

Vectors and matrices represent data. Probability describes uncertainty. Loss tells the model how wrong it is. Gradients tell it how to improve.

Learning Order And Task List

Study the theory first, then run the full workshop. The workshop is last because it combines the ideas rather than introducing them from zero.

| Page | Follow-along action | Evidence to keep |

|---|---|---|

| 4.1 Linear Algebra | Use vectors, matrices, dot product, norm, and cosine similarity to compare examples | One vector similarity calculation |

| 4.2 Probability and Statistics | Simulate uncertainty, distributions, mean, variance, entropy, and loss | One probability or entropy note |

| 4.3 Calculus and Optimization | Trace derivatives, gradients, learning rate, and gradient descent | One parameter-update table |

| 4.4 Hands-on Math Workshop | Connect vector similarity, probability, entropy/loss, and gradient descent in one runnable script | ch04_math_workshop_evidence/ |

Key terms for this chapter:

| Term | Meaning |

|---|---|

Embedding | A vector representation of text, images, users, or items |

dot product | How much two vector directions agree |

norm | Vector length or strength |

entropy | Uncertainty or surprise |

loss | A number that measures model error |

gradient | The direction that changes a value fastest |

GD / SGD | Gradient descent / stochastic gradient descent: walking downhill on loss |

First Runnable Loop

Install NumPy if needed:

python -m pip install numpy

Then run this script. It shows why vector similarity matters before you meet Embeddings and retrieval.

import numpy as np

python_topic = np.array([1.0, 1.0, 0.0])

data_topic = np.array([1.0, 0.8, 0.2])

unrelated_topic = np.array([0.0, 0.1, 1.0])

def cosine(a, b):

return a @ b / (np.linalg.norm(a) * np.linalg.norm(b))

print("Python vs data:", round(cosine(python_topic, data_topic), 3))

print("Python vs unrelated:", round(cosine(python_topic, unrelated_topic), 3))

Expected output:

Python vs data: 0.982

Python vs unrelated: 0.071

The code is small, but the idea returns later in Embeddings, retrieval, recommendation, attention, and RAG.

Depth Ladder

| Level | What you can prove |

|---|---|

| Minimum pass | You can run a vector similarity example and explain what each dimension means. |

| Project-ready | You can connect vector, probability, loss, and gradient to one model action instead of treating them as separate formulas. |

| Deeper check | You can change one input or learning rate, predict the direction of the result, then verify it with code. |

Common Failures

| Symptom | First thing to check | Usual fix |

|---|---|---|

| Formula feels abstract | What model action does it support? | Translate it into represent, compare, measure uncertainty, measure loss, or update |

| Vector examples feel arbitrary | What does each dimension mean? | Write labels for dimensions before calculating |

| Probability terms blur together | What is random, and what is the event? | List samples, outcomes, and probabilities in a tiny table |

| Gradient descent diverges | Learning rate is too large | Plot or print loss each step and lower the rate |

| Workshop feels like magic | Theory was skipped | Read the 4.1, 4.2, and 4.3 roadmap pages first |

Pass Check

Move to Chapter 5 when you can answer these five questions:

- How can one sample become a vector?

- Why can a model output be read as probability or confidence?

- What does loss measure?

- How does a gradient tell parameters which way to move?

- Can you run 4.4 Hands-on Math Workshop and explain the generated files?

For a printable checklist, use 4.0 Study Guide and Task Sheet. The next chapter grounds these math ideas in sklearn model training and evaluation.