5.6.1 ML Projects Roadmap: Baseline, Evidence, Improvement

This chapter is the exit point of Chapter 5. It proves you can turn a data problem into a modeling workflow that can be evaluated, explained, and shown in a portfolio.

Look at the Project Loop First

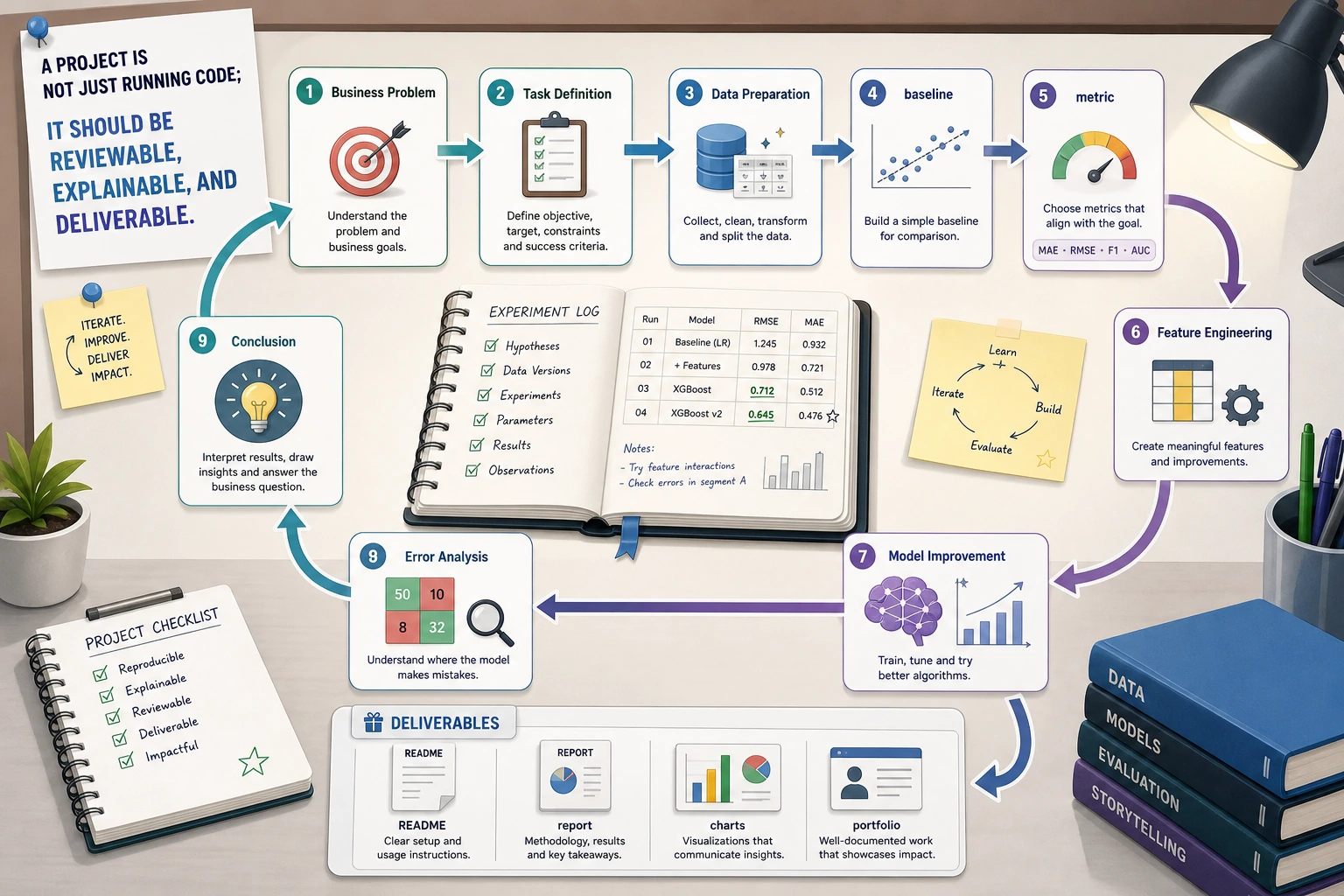

Keep this project loop:

problem -> data -> baseline -> metric -> improvement -> failure cases -> report

Do not jump straight to a complex model. A project without a baseline, metric, and failure analysis is only a demo run.

Keep One Experiment Log

Create ml_project_log_first_loop.py. This is not a model; it is the habit every model project needs.

experiments = [

{"version": "v1_baseline", "metric": 0.72, "change": "default model"},

{"version": "v2_features", "metric": 0.78, "change": "add ratio features"},

{"version": "v3_tuned", "metric": 0.80, "change": "tune max_depth"},

]

best = max(experiments, key=lambda row: row["metric"])

print("best_version:", best["version"])

print("best_metric:", best["metric"])

print("next_step: inspect failure cases before adding more models")

Expected output:

best_version: v3_tuned

best_metric: 0.8

next_step: inspect failure cases before adding more models

This is the mindset shift: from "I ran a model" to "I can compare versions and explain the next step."

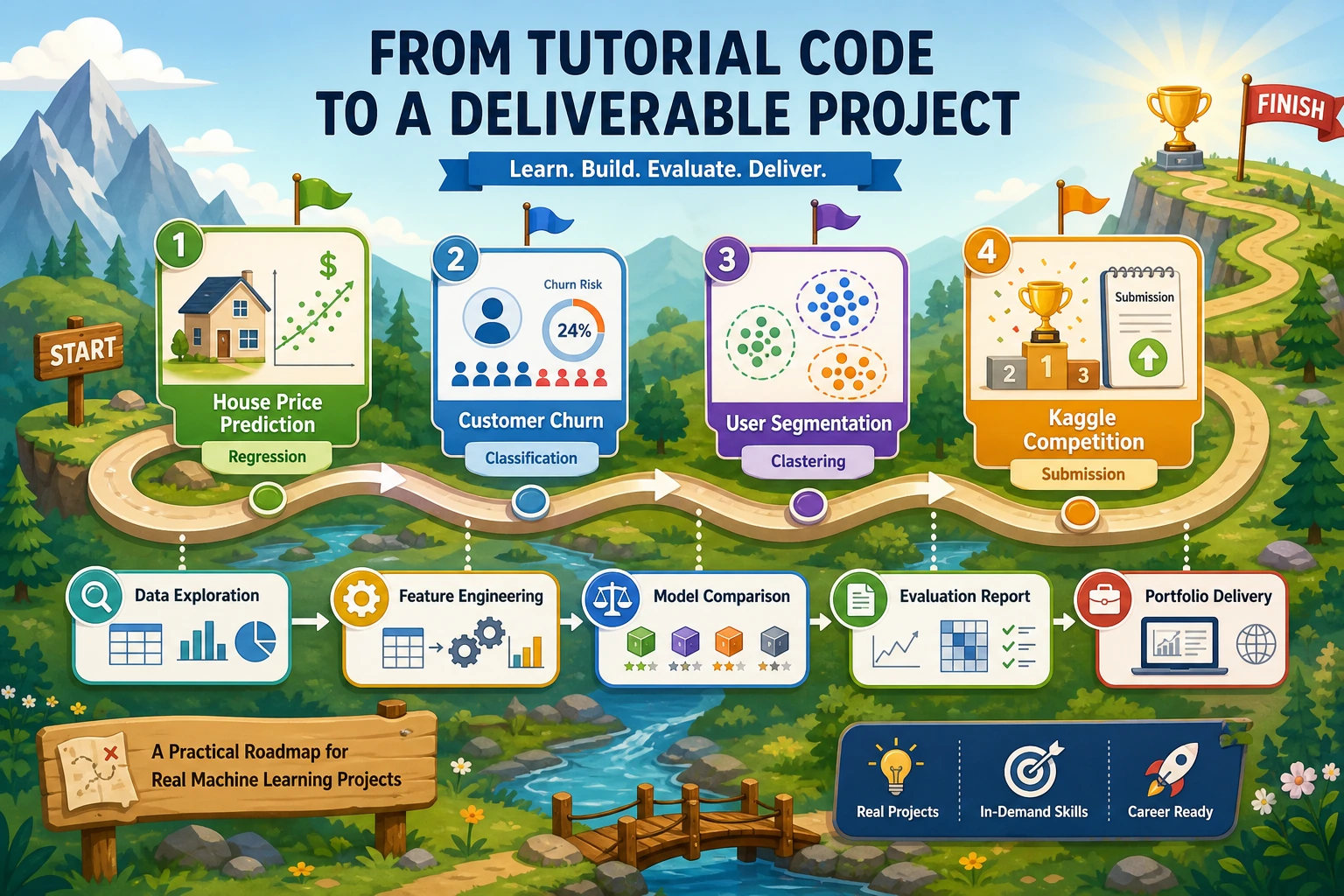

Learn in This Order

| Order | Read | What to deliver |

|---|---|---|

| 1 | 5.6.2 House Price Prediction | regression baseline and improvement |

| 2 | 5.6.3 Customer Churn Prediction | classification metric and threshold thinking |

| 3 | 5.6.4 User Segmentation | cluster interpretation and business labels |

| 4 | 5.6.5 Kaggle Practice | real submission workflow |

| 5 | 5.6.6 Hands-on ML Workshop | one complete evidence pack rehearsal |

The workshop comes last because it packages the project habits into one reproducible evidence pack.

Project Deliverable Standards

Keep these files for at least one project: README.md, run command, metric table, experiment log, one failure case, one chart, and a next-step plan.

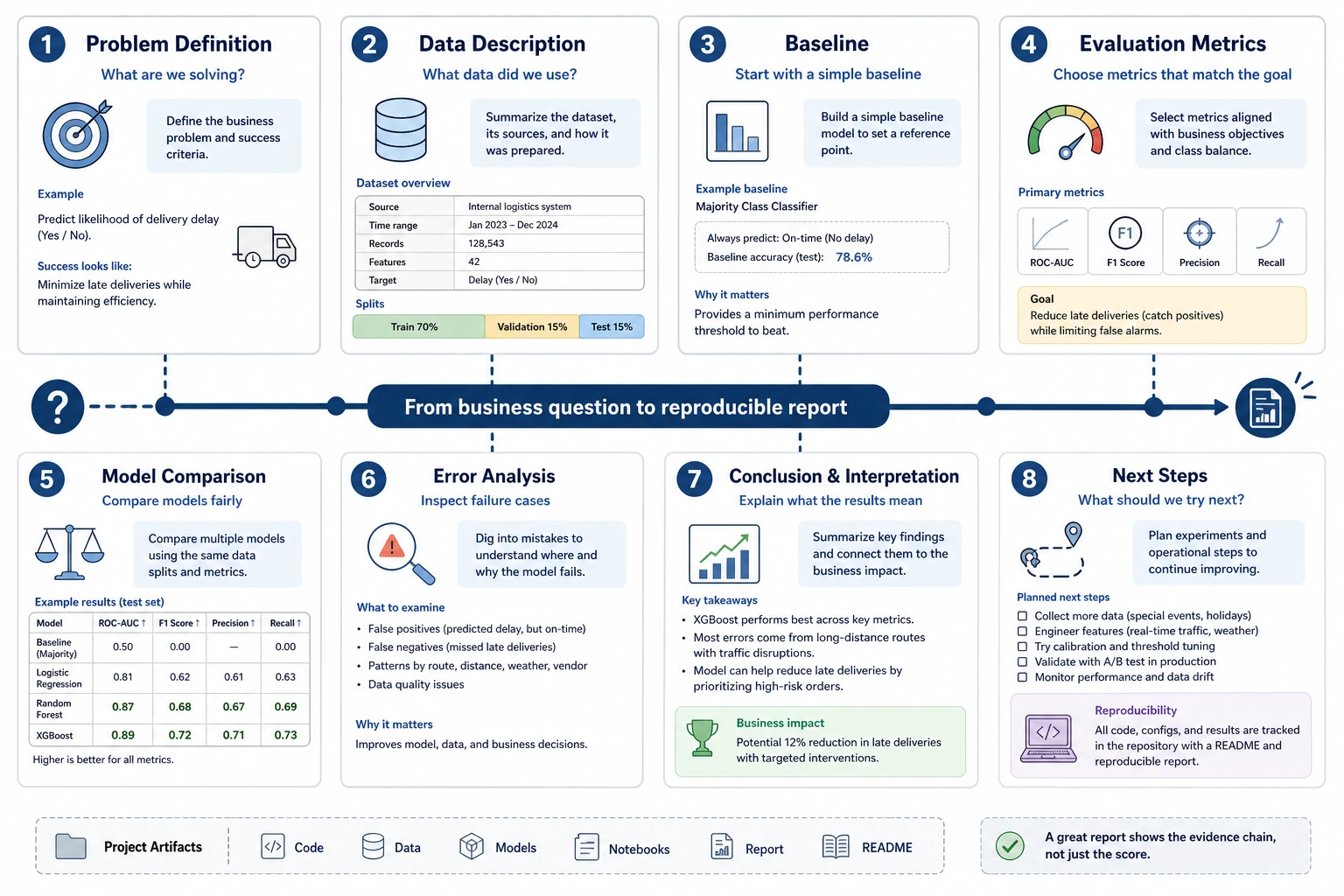

Pass Check

You pass this roadmap when you can clearly say: how I defined the task, what baseline I used, which metric I trusted, what improved, where the model failed, and what I would do next.