8.4.1 Engineering Roadmap: Async, API, Logs, Deploy

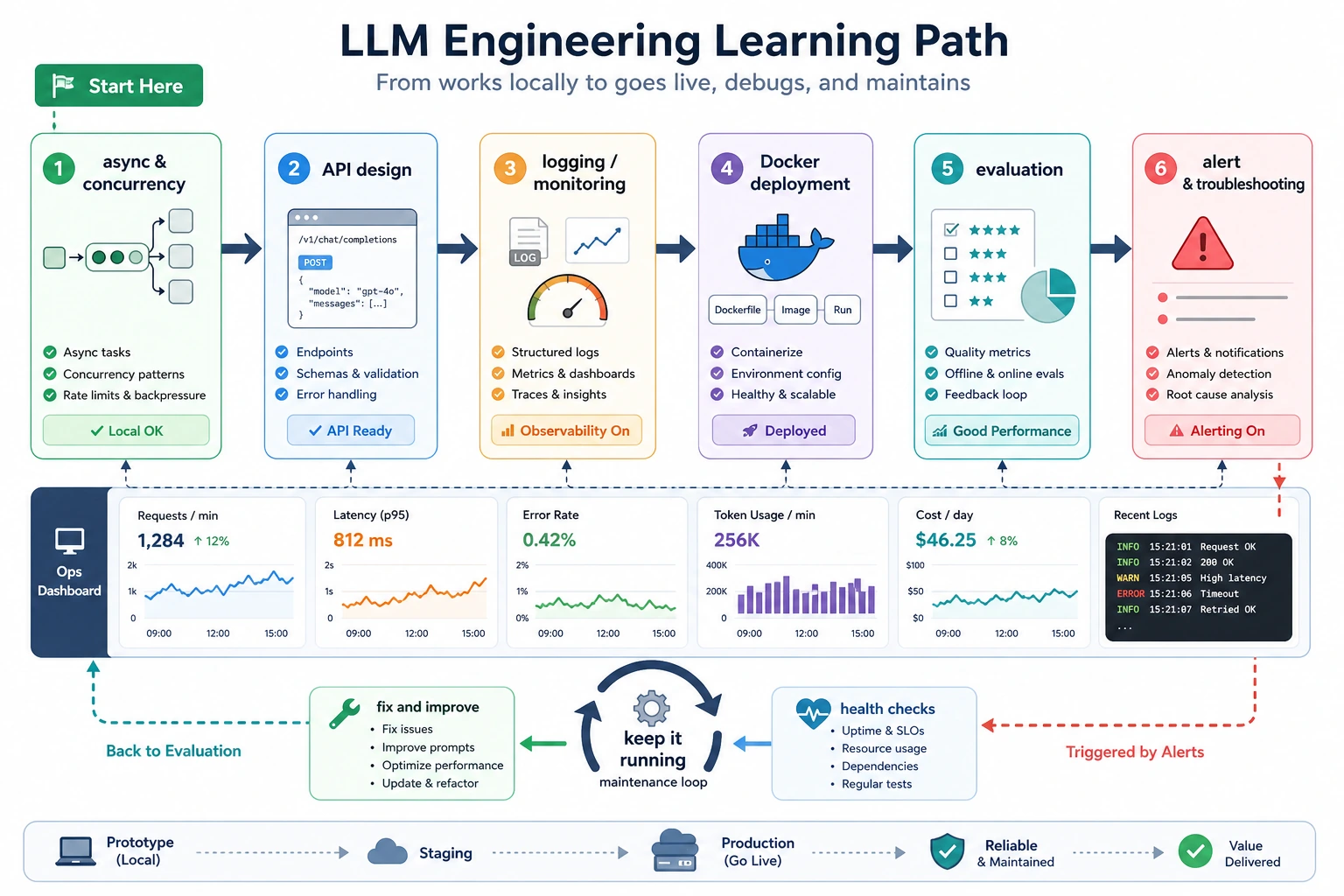

Engineering turns a working LLM demo into software that can be deployed, debugged, measured, and maintained after prompts, models, documents, and users change.

See the LLMOps Loop First

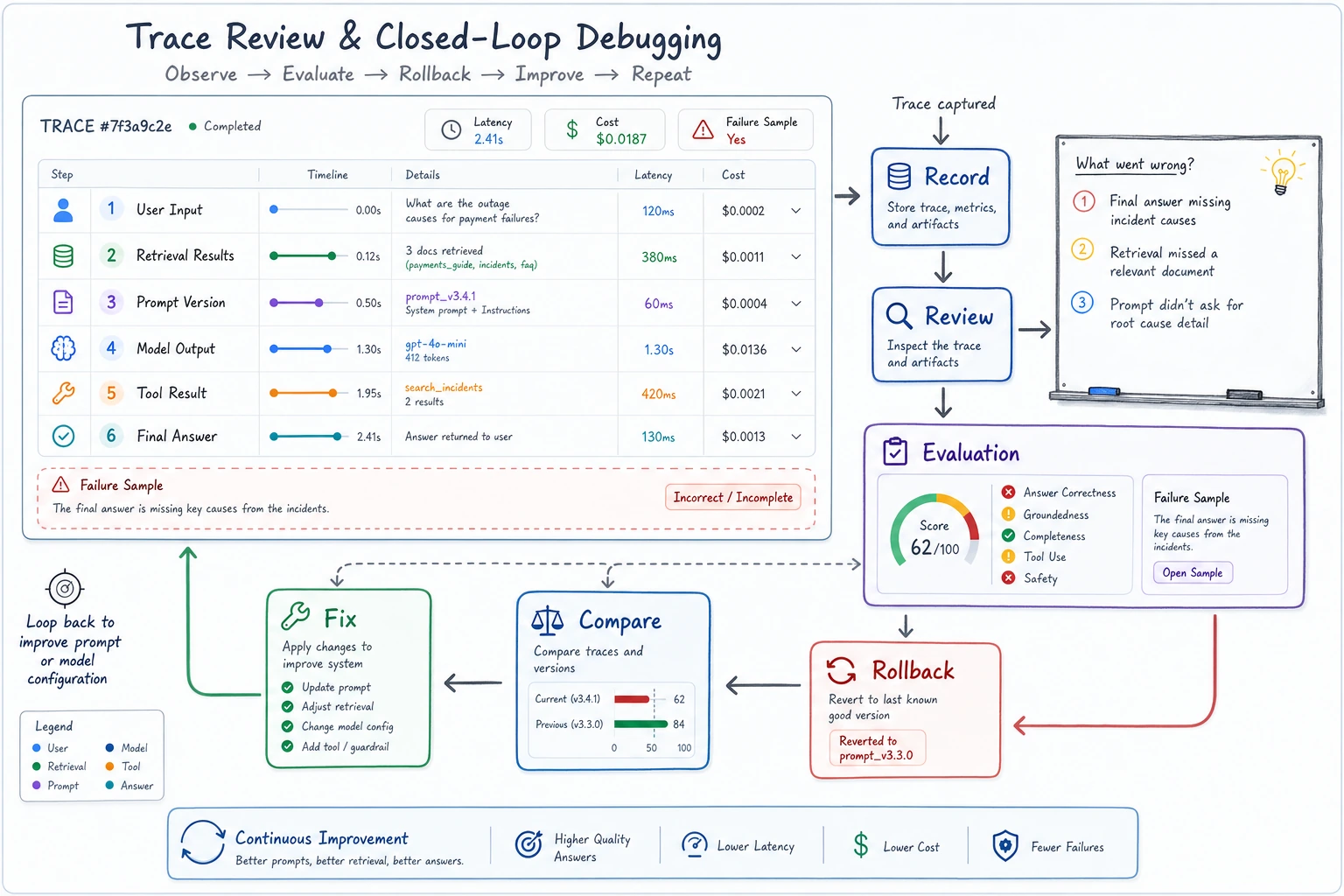

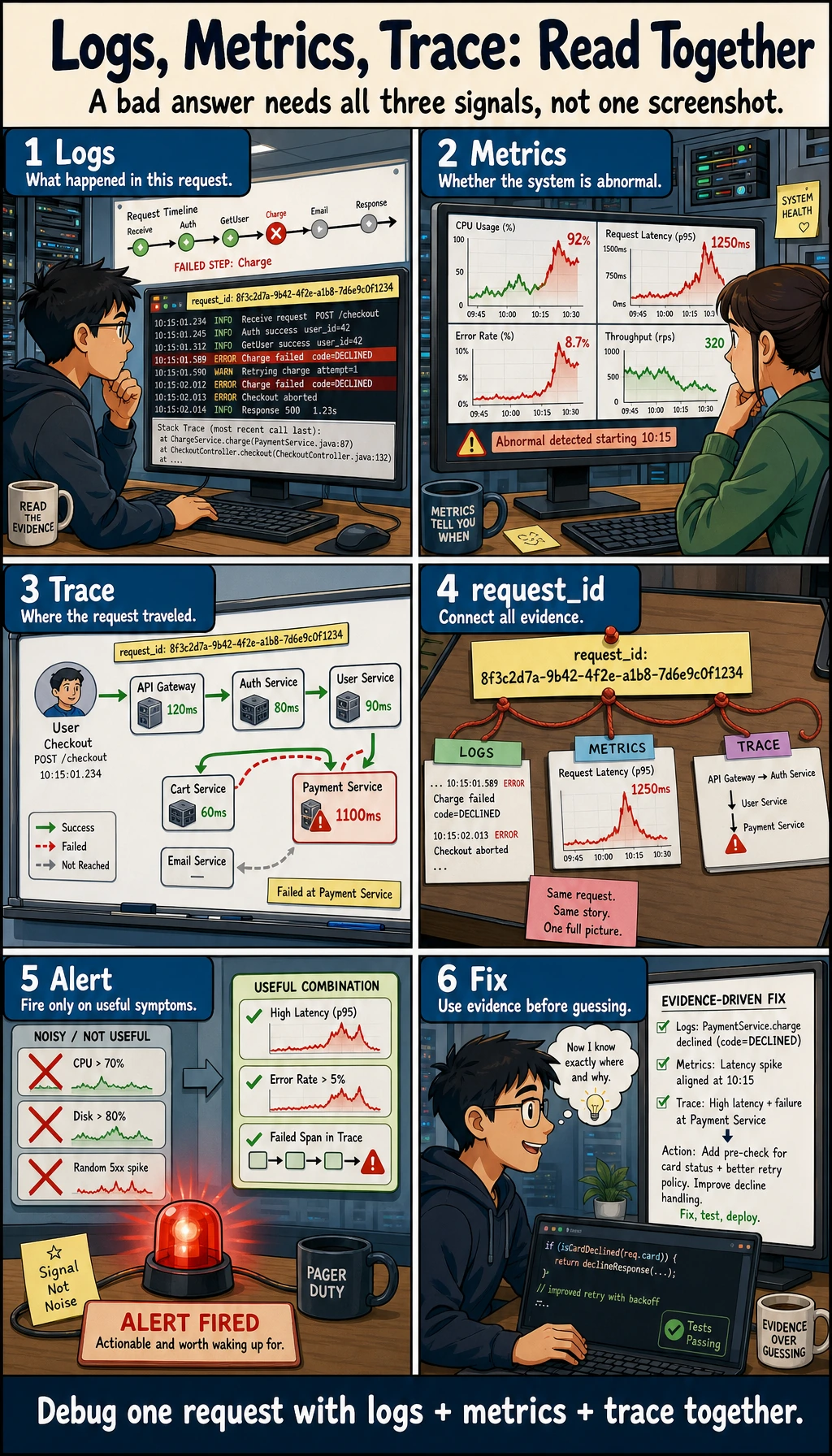

Your first engineering goal is simple: when an answer is wrong, you can explain which layer caused it.

Run a Trace Readiness Check

Every production-style LLM feature needs enough trace fields to debug one bad answer.

```python
trace = {
    "request_id": "demo-001",
    "prompt_version": "rag-v2",
    "retrieval_hits": 2,
    "model_ms": 850,
    "format_ok": True,
    "cost_usd": 0.003,
}

required = ["request_id", "prompt_version", "retrieval_hits", "model_ms", "format_ok", "cost_usd"]

print("trace_ready:", all(field in trace for field in required))
print("debug_fields:", ", ".join(required))
```

Expected output:

```text
trace_ready: True
debug_fields: request_id, prompt_version, retrieval_hits, model_ms, format_ok, cost_usd
```

If these fields are missing, debugging becomes guesswork. Add logs before adding more features.
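To make the readiness check more actionable, it can help to report which fields are missing and to write each trace as a single JSON log line. The following is a minimal sketch, not a prescribed implementation: the helper names `missing_fields` and `log_trace` and the logger name `llm_trace` are hypothetical, and the field list simply mirrors the check above.

```python
import json
import logging

# Field names mirror the readiness check above.
REQUIRED_FIELDS = [
    "request_id", "prompt_version", "retrieval_hits",
    "model_ms", "format_ok", "cost_usd",
]

logger = logging.getLogger("llm_trace")  # hypothetical logger name


def missing_fields(trace: dict) -> list[str]:
    """Return the required fields that the trace does not contain."""
    return [field for field in REQUIRED_FIELDS if field not in trace]


def log_trace(trace: dict) -> str:
    """Serialize the trace as one JSON line; warn when it is incomplete."""
    missing = missing_fields(trace)
    line = json.dumps({**trace, "missing_fields": missing}, sort_keys=True)
    if missing:
        logger.warning(line)
    else:
        logger.info(line)
    return line


if __name__ == "__main__":
    logging.basicConfig(level=logging.INFO, format="%(message)s")
    incomplete = {"request_id": "demo-002", "prompt_version": "rag-v2"}
    print("missing:", missing_fields(incomplete))
    log_trace(incomplete)
```

Keeping one JSON object per line means a trace can be found by `request_id` with ordinary text search before any monitoring stack exists.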

Learn in This Order

| Step | Read | Practice Output |
|---|---|---|
| 1 | Async programming | Add timeouts, retries, a concurrency limit, and cancellation handling |
| 2 | API design | Define the request/response schema and error codes |
| 3 | Logging and monitoring | Record prompt version, retrieval hits, latency, cost, and failures |
| 4 | Docker deployment | Package the app with reproducible run instructions |
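The practice output for step 1 can be sketched with the standard library's `asyncio`. This is an illustrative sketch under stated assumptions: `fake_model_call` is a stand-in for a real model client, and the limits (`MAX_CONCURRENCY`, `TIMEOUT_S`, `MAX_ATTEMPTS`) are placeholder values rather than recommendations.

```python
import asyncio
import random

MAX_CONCURRENCY = 2  # assumed limit on in-flight calls
TIMEOUT_S = 1.0      # assumed per-attempt timeout in seconds
MAX_ATTEMPTS = 3     # assumed retry budget


async def fake_model_call(prompt: str) -> str:
    """Hypothetical stand-in for a model client; sleeps briefly and echoes."""
    await asyncio.sleep(random.uniform(0.01, 0.05))
    return f"answer to: {prompt}"


async def call_with_limits(prompt: str, sem: asyncio.Semaphore) -> str:
    """Run one call under a concurrency limit, with a timeout and retries."""
    async with sem:  # at most MAX_CONCURRENCY callers pass this point at once
        for attempt in range(1, MAX_ATTEMPTS + 1):
            try:
                return await asyncio.wait_for(fake_model_call(prompt), TIMEOUT_S)
            except asyncio.TimeoutError:
                # Only timeouts are retried. asyncio.CancelledError is not
                # caught here, so cancelling the task stops the retries.
                if attempt == MAX_ATTEMPTS:
                    raise
                await asyncio.sleep(0.1 * 2 ** (attempt - 1))  # exponential backoff
    raise RuntimeError("unreachable: the loop always returns or raises")


async def main() -> list[str]:
    sem = asyncio.Semaphore(MAX_CONCURRENCY)
    prompts = ["q1", "q2", "q3", "q4"]
    # gather returns results in the same order as the prompts.
    return await asyncio.gather(*(call_with_limits(p, sem) for p in prompts))


if __name__ == "__main__":
    for answer in asyncio.run(main()):
        print(answer)
```

In this sketch the semaphore slot is held during backoff, which keeps the code short but reduces throughput while a call is retrying; releasing the slot between attempts is a reasonable alternative.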

Pass Check

You pass this chapter when your minimal app has a run command, an API contract, error handling, logs, and one documented failure investigation.
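As a rough illustration of what an API contract with error handling might look like, the sketch below defines a request schema, a response schema, and a small set of error codes using only the standard library. All names here (`AskRequest`, `AskResponse`, `ErrorCode`, `handle`) and the specific error codes are hypothetical; a real app would substitute its own fields and its web framework's validation.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class ErrorCode(str, Enum):
    """Hypothetical error codes that clients can branch on."""
    INVALID_REQUEST = "invalid_request"
    MODEL_TIMEOUT = "model_timeout"
    RETRIEVAL_EMPTY = "retrieval_empty"


@dataclass
class AskRequest:
    question: str
    prompt_version: str = "rag-v2"


@dataclass
class AskResponse:
    request_id: str
    answer: Optional[str] = None
    error_code: Optional[ErrorCode] = None
    error_message: Optional[str] = None


def handle(request_id: str, req: AskRequest) -> AskResponse:
    """Validate the request and return either an answer or a coded error."""
    if not req.question.strip():
        return AskResponse(
            request_id,
            error_code=ErrorCode.INVALID_REQUEST,
            error_message="question must not be empty",
        )
    # Placeholder answer; a real handler would call retrieval and the model.
    return AskResponse(request_id, answer=f"(stub) {req.question}")


if __name__ == "__main__":
    print(handle("demo-001", AskRequest(question="What is LLMOps?")))
    print(handle("demo-002", AskRequest(question="   ")))
```

Returning the `request_id` in every response, including errors, lets a reported failure be matched to its trace log.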

The exit mini project is an engineering evidence pack: one trace log, one common error, one fix, one regression check, and one deployment note.