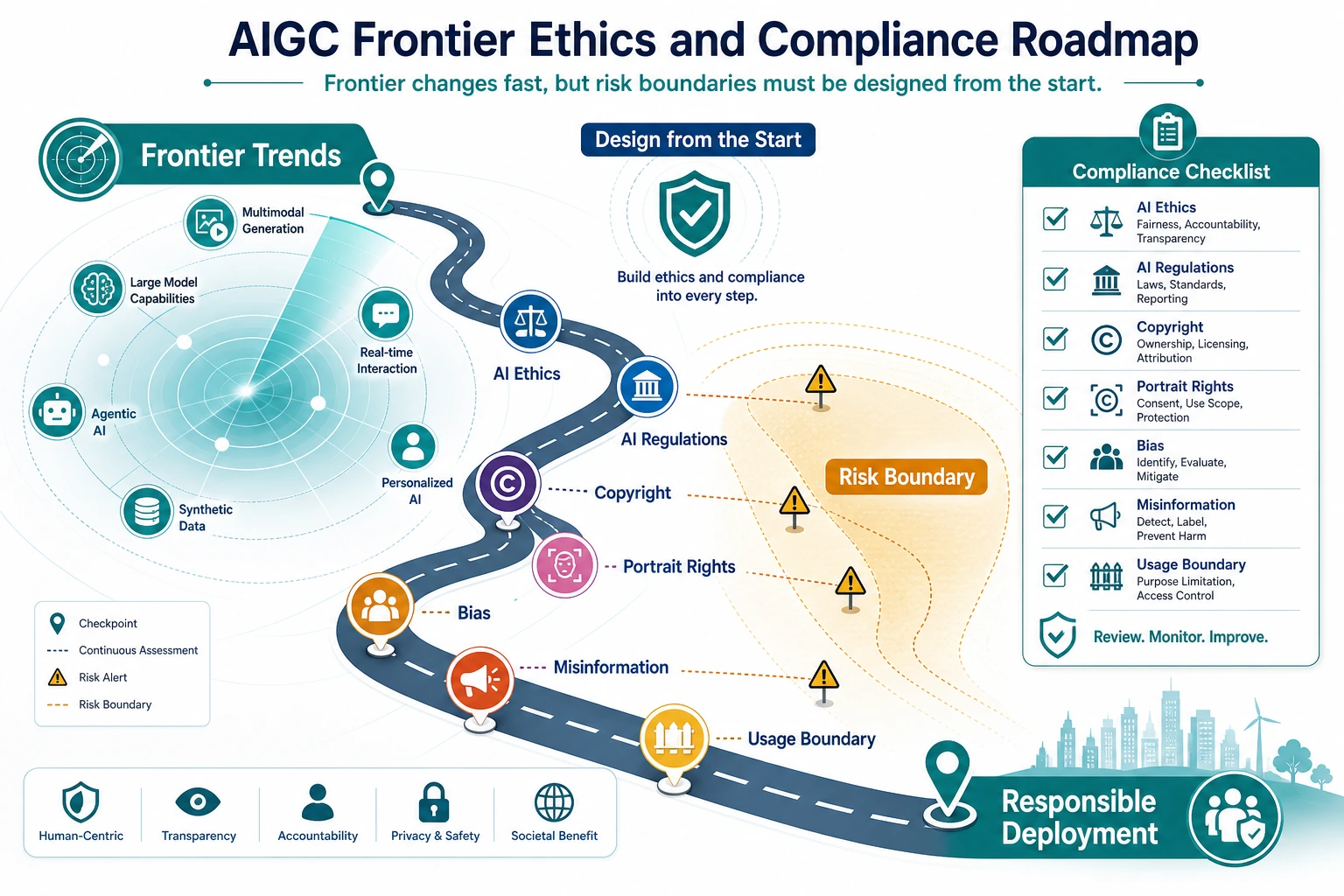

12.4.1 Frontier and Ethics Roadmap: Risk Before Release

Responsible AIGC is not a disclaimer at the end. It is a workflow that checks material sources, people, voices, synthetic labels, sensitive content, and human review before export.

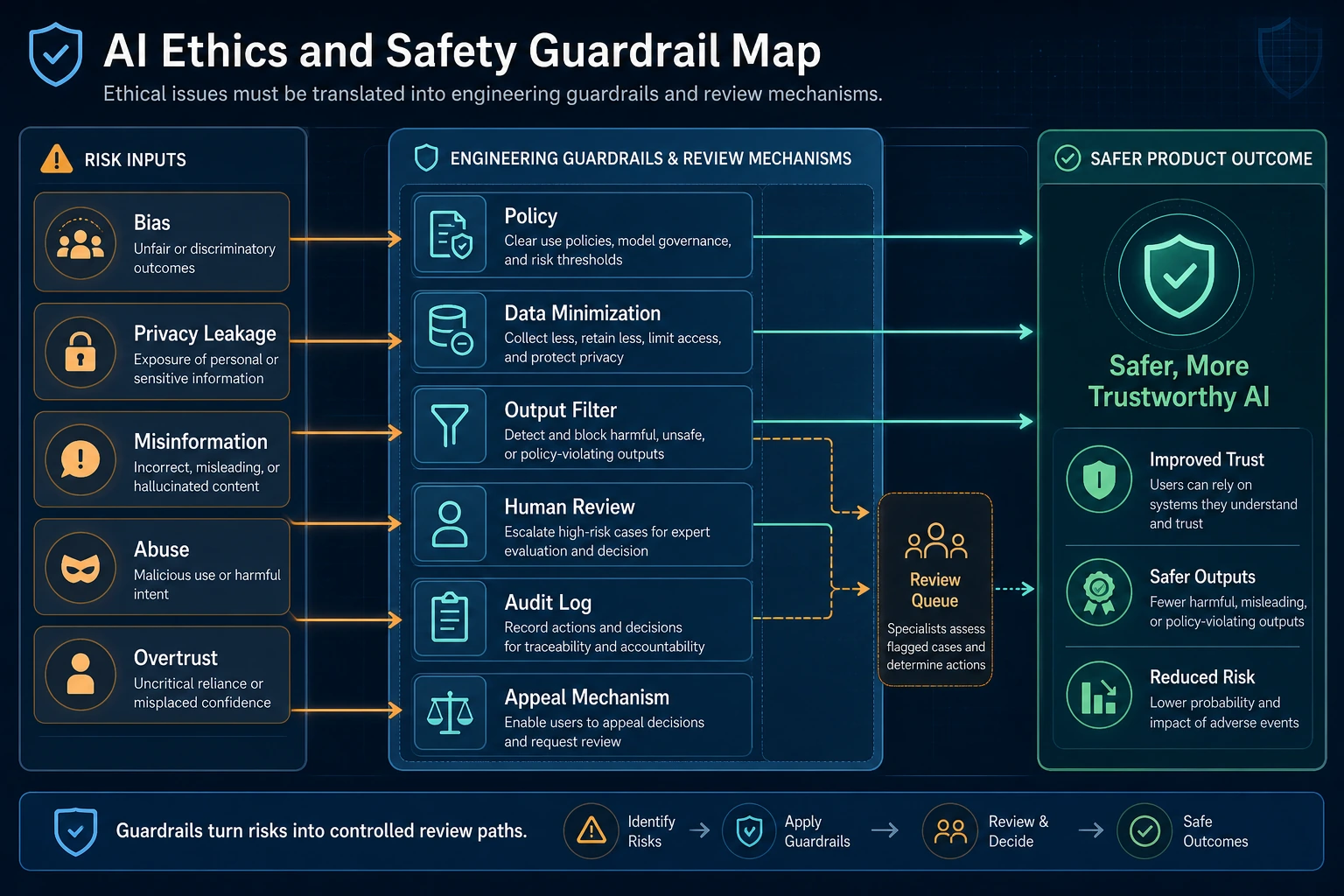

See the Guardrails First

The first habit is to ask what should be blocked, what should be limited, and what needs human confirmation.

Run a Risk Checklist

request = {

"uses_real_person": False,

"uses_cloned_voice": True,

"licensed_assets": True,

"synthetic_media": True,

}

checks = []

if request["uses_cloned_voice"]:

checks.append("voice authorization")

if request["synthetic_media"]:

checks.append("synthetic content label")

if not request["licensed_assets"]:

checks.append("asset license review")

decision = "human_review_required" if checks else "ready_to_export"

print("decision:", decision)

print("checks:", ", ".join(checks))

Expected output:

decision: human_review_required

checks: voice authorization, synthetic content label

This is not legal advice. It is an engineering checklist that makes product risk visible early.

Learn in This Order

| Step | Read | Practice Output |

|---|---|---|

| 1 | Frontier trends | Name the capability and likely product impact |

| 2 | Ethics and safety | Map copyright, portrait, voice, bias, and misinformation risks |

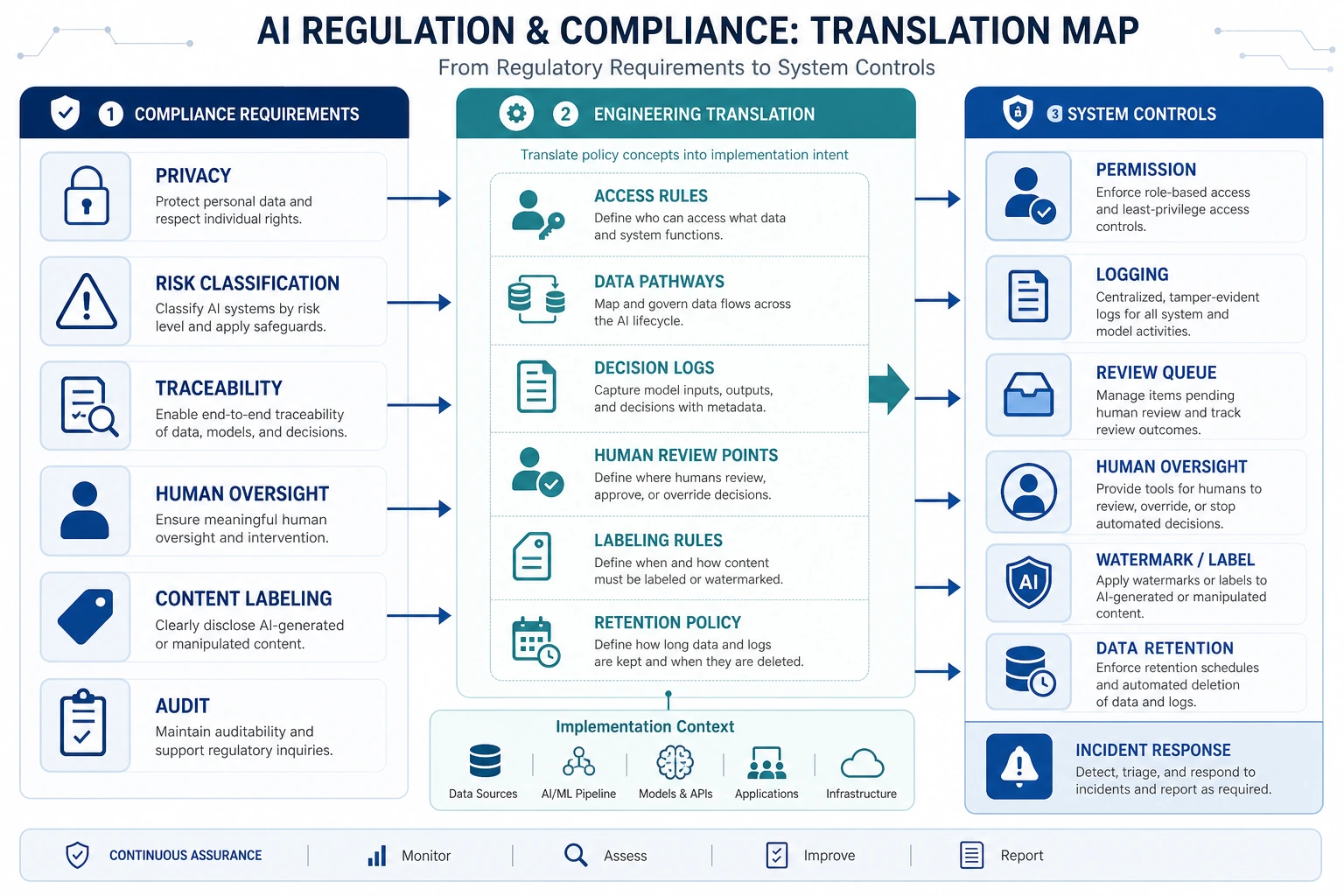

| 3 | Regulations | Convert rules into input checks, review steps, labels, and logs |

Pass Check

You pass this chapter when you can add a risk checklist to one AIGC workflow and explain which cases are blocked, restricted, reviewed, or ready to export.