12.2.6 Latest Progress

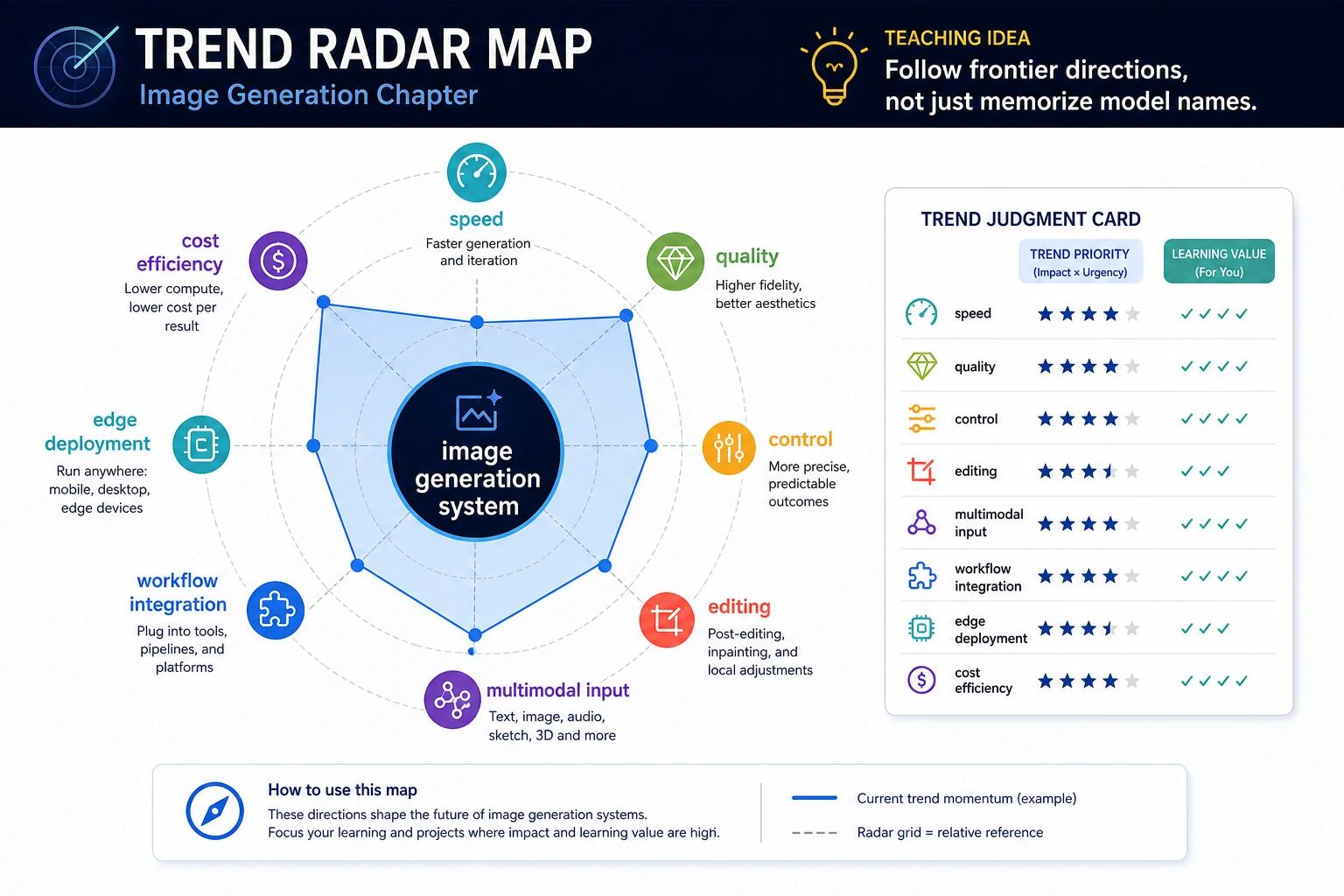

When following the frontier, don’t just chase model names. As you read the chart, separate the directions of “faster,” “more controllable,” “more modalities,” “moving into workflows,” “on-device,” and “cost efficiency” so you can tell which trends are worth learning right now.

A “latest progress” lesson is easy to write in a shallow way. If you only list model names, they’ll be outdated before long; if you only talk about trends, it’s hard for learners to actually gain something concrete.

A more valuable approach is to focus on the main threads that have remained valid over the past few years and are very likely to continue:

- Faster generation

- Stronger control

- More modalities as input

- From single images to complete workflows

This lesson reads the evolution of image generation along these four threads.

Learning objectives

- Understand several stable technical threads in image generation over the past few years

- Learn to distinguish “changes in model names” from “changes in underlying direction”

- Use a runnable example to understand the idea of multi-objective trend ranking

- Build a reading framework for continuing to track this field

Why can’t “latest progress” rely only on memorizing model names?

Because names change fast, while underlying directions change more slowly

The image generation field changes quickly. If you only remember:

- which model is hot right now

- which company released which version

you’ll quickly lose your footing.

A more stable approach is to look at:

- where speed is heading

- where controllability is heading

- where the interaction style is heading

- where workflow integration is heading

An analogy

Reading “latest progress” is more like looking at city road planning, not just remembering which car is fastest today.

- Cars change

- Routes get upgraded

- But the direction of the main roads is often more worth remembering

Main thread 1: Generation is getting faster and faster

Early pain point: beautiful, but slow

What first amazed people about diffusion models was:

- high image quality

- strong semantic alignment

But the pain points were also obvious:

- many sampling steps

- long inference time

Later evolution direction

One obvious thread over the past few years is:

- fewer steps

- higher-quality distillation

- faster sampling paths

This means image generation is no longer just “drawing offline at a leisurely pace,” and is increasingly moving toward:

- interactive generation

- real-time editing

Why is this thread especially important?

Because speed is not just a nice bonus; it directly determines:

- whether users are willing to iterate on prompts

- whether a product can support real-time interaction

- whether costs will spiral out of control

Main thread 2: Controllable generation is getting stronger

From “give a prompt” to “give more conditions”

Early text-to-image experiences often were:

- able to generate the general idea

- but unstable in details

A clear later direction has been to move toward more control conditions, such as:

- pose

- depth

- edges

- region masks

- reference images

- style references

Image editing has become a focus

A very stable trend now is:

- not just generating new images

- but also modifying existing ones

Because in real content production scenarios, users more often need to:

- tweak composition

- change the background

- fix local details

- preserve character consistency

Why does “controllable” feel more like a product capability than “draws better”?

Because content production is not just about a single output sample. What really matters is:

- repeatability

- editability

- predictability

This is also a sign that image generation technology is becoming more productized.

Main thread 3: From single modality to unified multimodality

Inputs are no longer only text

More and more systems now accept combined inputs:

- text

- images

- sketches

- layouts

- region prompts

In other words, generation models are becoming more like visual interaction systems, not just “text to image.”

Outputs are no longer only a single image

The boundary of image generation is expanding outward:

- video

- 3D / multi-view

- layered assets

- UI / product image / design draft assistance

So image generation is gradually becoming less of an isolated track, and more of a convergence point for broader “multimodal content generation.”

Why is this thread worth paying attention to?

Because it will affect how you learn later:

- don’t just focus on diffusion formulas

- start paying attention to interaction interfaces and content pipelines

Main thread 4: From model demos to content workflows

The early common goal: generate one beautiful image

That is of course important, but it is not enough for production environments.

The more realistic goal now

Common real-world needs are actually:

- batch-generate multiple candidates

- keep characters or products consistent

- automatically adapt sizes

- connect with review, asset libraries, and publishing systems

What does this mean?

It means image generation systems are increasingly becoming workflow nodes, not standalone toys.

That is also why you see more and more focus on:

- human-AI collaboration

- editable intermediate results

- asset reuse

- safety review

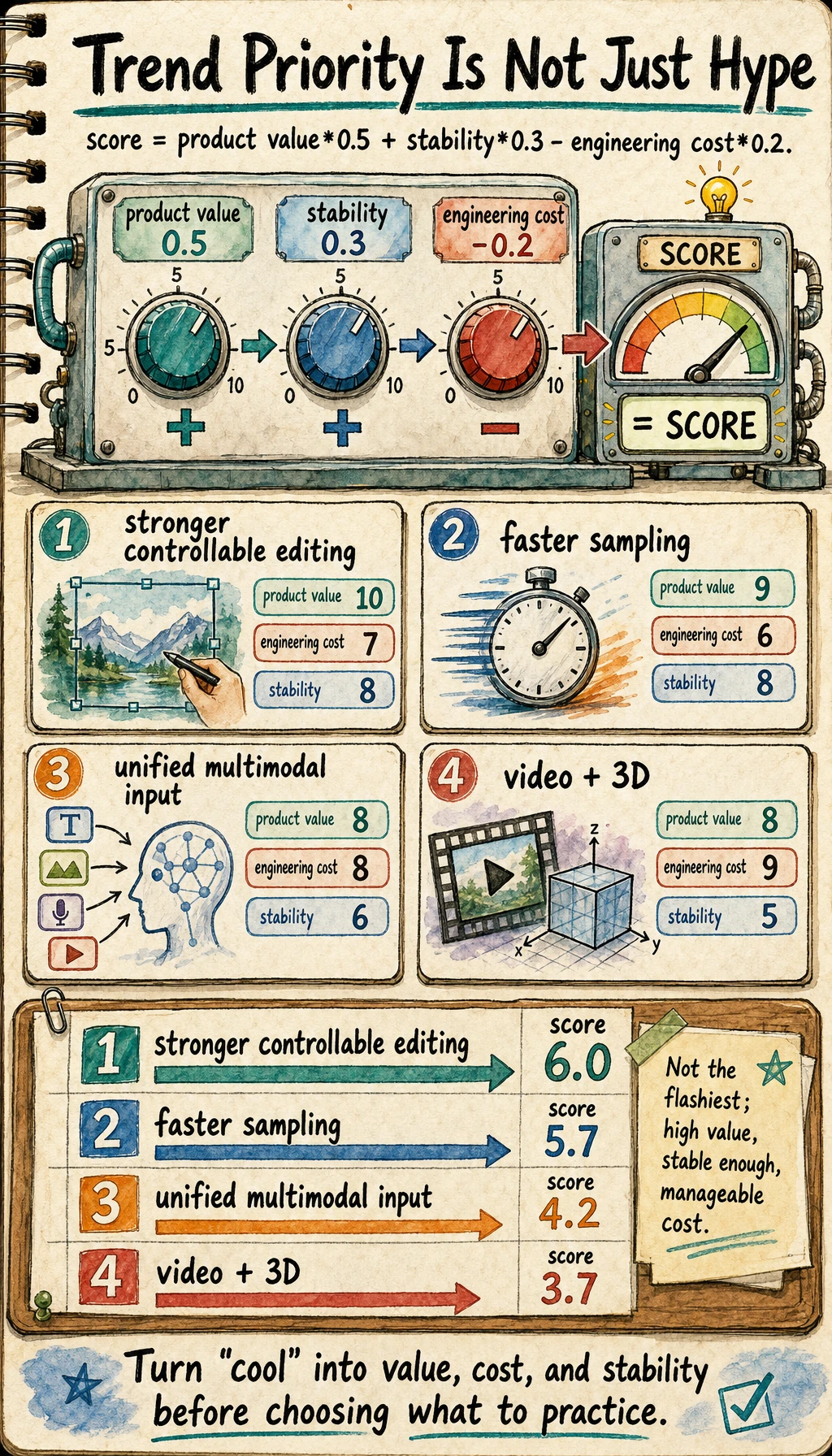

First run a small “trend priority” example

The example below is not meant to simulate a real paper benchmark, but to help you build a very practical habit:

- don’t just look at the direction that sounds the coolest

- also look at its combined value for product, cost, and workflow

trends = [

{"name": "faster sampling", "product_value": 9, "engineering_cost": 6, "stability": 8},

{"name": "stronger controllable editing", "product_value": 10, "engineering_cost": 7, "stability": 8},

{"name": "unified multimodal input", "product_value": 8, "engineering_cost": 8, "stability": 6},

{"name": "from single images to video and 3D", "product_value": 8, "engineering_cost": 9, "stability": 5},

]

def score(item):

return item["product_value"] * 0.5 + item["stability"] * 0.3 - item["engineering_cost"] * 0.2

ranked = sorted(

[{**item, "score": round(score(item), 2)} for item in trends],

key=lambda x: x["score"],

reverse=True,

)

for item in ranked:

print(item)

Expected output:

{'name': 'stronger controllable editing', 'product_value': 10, 'engineering_cost': 7, 'stability': 8, 'score': 6.0}

{'name': 'faster sampling', 'product_value': 9, 'engineering_cost': 6, 'stability': 8, 'score': 5.7}

{'name': 'unified multimodal input', 'product_value': 8, 'engineering_cost': 8, 'stability': 6, 'score': 4.2}

{'name': 'from single images to video and 3D', 'product_value': 8, 'engineering_cost': 9, 'stability': 5, 'score': 3.7}

The exact weights are not universal. The practical lesson is to turn “this trend feels exciting” into a small scoring rule that includes value, cost, and stability.

What is this code trying to convey?

When you truly read “latest progress,” don’t just ask whether a technique is flashy, also ask:

- is its product value large?

- is the engineering barrier high?

- is the stability already good enough?

Why is this more useful than simply listing items?

Because later you won’t just be reading papers, you’ll very likely need to make judgments:

- which direction is worth learning first

- which direction is worth putting into practice first

How should you keep following this field?

Follow “directions” first, not “names” first

Prioritize tracking:

- sampling acceleration

- controllable editing

- multimodal unification

- workflow integration

When reading papers, it helps to ask four questions

- Does it solve a speed, quality, controllability, or workflow problem?

- Does it rely on a new training objective, a new architecture, or a new system design?

- Is it better suited to research demos, or is it already close to being product-ready?

- Will it noticeably change the production process?

The most helpful reading order for beginners

It is recommended to first understand:

- speed

- controllable editing

- workflow integration

Once you understand these three clearly, then go after more frontier areas such as unified multimodality and 3D / video extensions.

Common misunderstandings

Misunderstanding 1: Latest progress means the latest model name

Model names change; the main threads are more worth following.

Misunderstanding 2: The more frontier a direction is, the more suitable it is to learn right away

Not necessarily. Some directions are very frontier, but still quite far from product and engineering deployment.

Misunderstanding 3: Image generation is only about image quality

What matters more and more now is:

- control

- speed

- workflow integration

Summary

The most important thing in this lesson is not to give you a list of model names that will soon become outdated, but to build a more stable framework:

The stable evolution directions of image generation over the past few years are faster sampling, stronger controllable editing, more unified multimodal input, and the shift from single images to complete content workflows.

As long as these four main threads are clear, you won’t be left with only fragmented impressions like “who released another new model” when you continue following this field.

Exercises

- Re-rank these four main threads based on your own understanding, and explain why.

- Think about this: if you were building an e-commerce product image system, which thread would matter most? Why?

- Why is “controllable editing” often more like a product capability than “improving image quality a little more”?

- When you read a new image generation paper next time, which two questions will you ask first?