9.7.6 Multi-Agent Challenges and Solutions

In the previous sections, you saw that multi-Agent systems can divide work, communicate, and coordinate. But once you actually build the system, you’ll discover one reality:

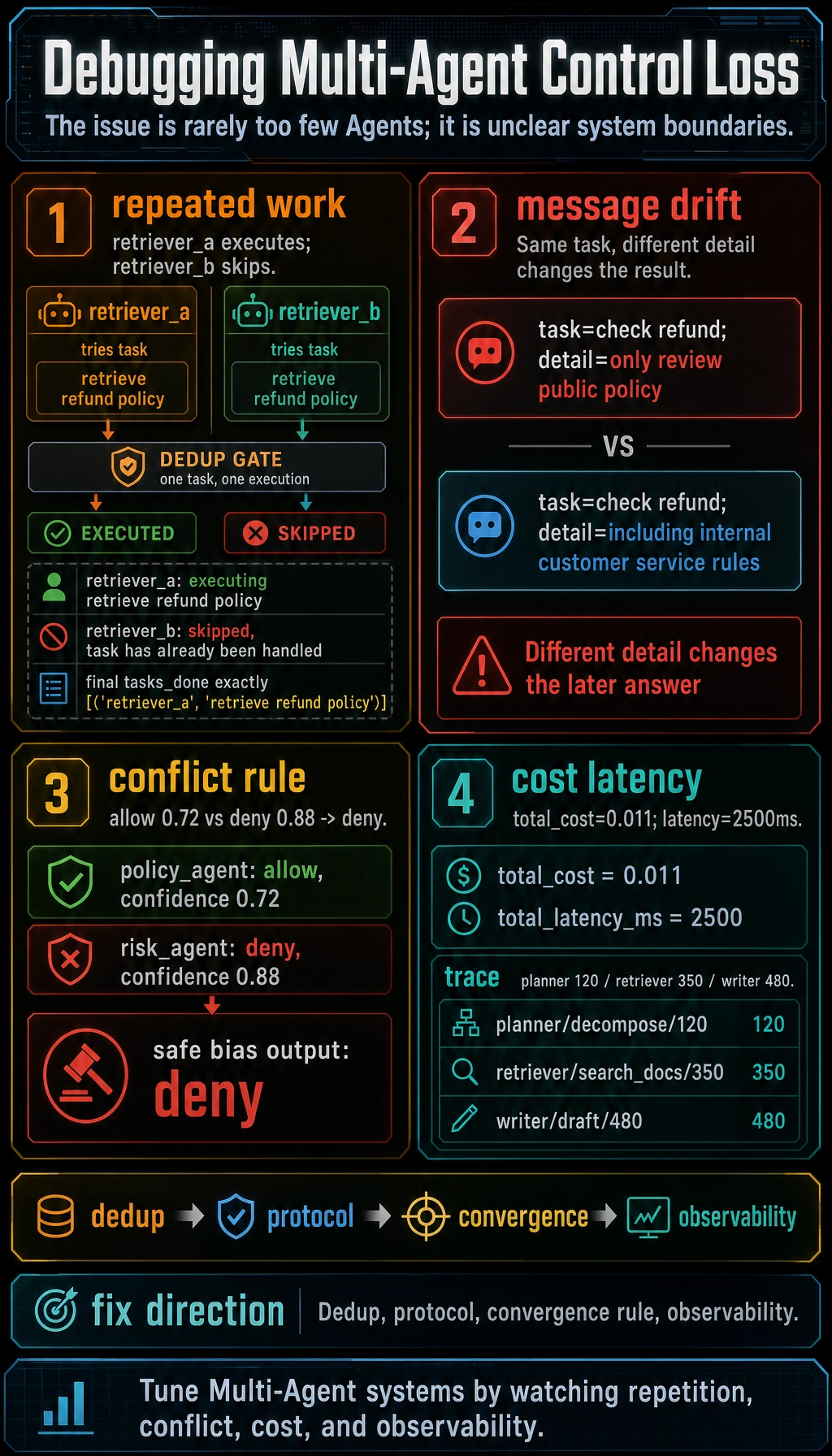

The hard part of multi-Agent systems is not “whether you can spin up more Agents,” but “when the system starts to lose control.”

This section is all about those “loss of control” points.

Learning Objectives

- Understand the most common failure modes of multi-Agent systems

- Learn to break problems down into communication, coordination, cost, and quality

- Read a minimal example of conflict handling and deduplication

- Understand why the key to multi-Agent systems is often not being smarter, but being more controllable

Why Are Multi-Agent Systems More Error-Prone?

The Most Common Problems in a Single Agent

Common single-Agent problems are usually:

- Reasoning mistakes

- Choosing the wrong tool

- Unstable outputs

Multi-Agent Systems Add Another Layer of System Complexity

In addition to errors made by individual Agents, multi-Agent systems introduce new issues:

- Two Agents do the same work twice

- The same message is understood differently by different Agents

- A subtask is completed, but the main task never converges

- Cost and latency stack up layer by layer

In other words:

Multi-Agent = single-agent intelligence problems + distributed coordination problems.

That’s why it sounds more powerful, but in practice it’s often more fragile.

Common Challenge 1: Repeated Work

Why Is Repetition So Easy?

As long as task boundaries are not clear enough, it’s easy to see:

- The planner assigns it once

- The worker does another retrieval on its own

- The reviewer repeats the same check again

A Minimal Example

tasks_done = []

def run_task(agent, task):

tasks_done.append((agent, task))

run_task("retriever_a", "retrieve refund policy")

run_task("retriever_b", "retrieve refund policy")

print(tasks_done)

Expected output:

[('retriever_a', 'retrieve refund policy'), ('retriever_b', 'retrieve refund policy')]

This example is very simple, but it already shows:

Without deduplication, multi-Agent systems can easily “look busy” while actually wasting effort.

A Minimal Fix

assigned = set()

tasks_done = []

def run_task_once(agent, task):

if task in assigned:

return f"{agent}: skipped, task has already been handled"

assigned.add(task)

tasks_done.append((agent, task))

return f"{agent}: executing {task}"

print(run_task_once("retriever_a", "retrieve refund policy"))

print(run_task_once("retriever_b", "retrieve refund policy"))

print(tasks_done)

Expected output:

retriever_a: executing retrieve refund policy

retriever_b: skipped, task has already been handled

[('retriever_a', 'retrieve refund policy')]

Common Challenge 2: Message Distortion and State Desynchronization

Why Does Distortion Happen?

Because what Agents pass around is not the “real world,” but:

- Text messages

- JSON messages

- Intermediate state

Once message formats are inconsistent or fields are unclear, the system can easily end up with:

- I thought you meant A

- But you were actually expressing B

An Example

message_a = {"task": "check refund", "detail": "only review public policy"}

message_b = {"task": "check refund", "detail": "including internal customer service rules"}

print(message_a)

print(message_b)

Expected output:

{'task': 'check refund', 'detail': 'only review public policy'}

{'task': 'check refund', 'detail': 'including internal customer service rules'}

These two messages differ by only a little, but the impact on the result can be huge. If the system does not constrain the message protocol, it can easily drift off course later.

An Engineering Lesson

As soon as a system starts using fields like:

taskdetailcontextnotes

with vague semantics, you should be alert to whether the communication design is already loosening up.

Common Challenge 3: How Do You Converge Conflicting Conclusions?

Multi-Agent Systems Easily Reach Different Conclusions

For example:

- A policy Agent says “allowed”

- A business rules Agent says “not allowed”

This is not an exception; it’s the norm.

A Minimal Conflict Example

results = {

"policy_agent": {"decision": "allow", "confidence": 0.72},

"risk_agent": {"decision": "deny", "confidence": 0.88}

}

print(results)

Expected output:

{'policy_agent': {'decision': 'allow', 'confidence': 0.72}, 'risk_agent': {'decision': 'deny', 'confidence': 0.88}}

Conflict Resolution Must Define at Least One Rule

The simplest and most common rules are:

- Highest confidence wins

- Reviewer makes the final decision

- Supervisor makes the final decision

- Conservative bias first (common for high-risk tasks)

For example, a conservative-bias version:

Continue in the same file or interpreter session after the conflict example so results is already defined.

def resolve_with_safe_bias(results):

decisions = [r["decision"] for r in results.values()]

if "deny" in decisions:

return "deny"

return "allow"

print(resolve_with_safe_bias(results))

Expected output:

deny

If you do not design a convergence rule, the system becomes:

Multiple Agents are all working hard, but nobody can make the final call.

Common Challenge 4: Costs and Latency Grow Exponentially

Why Does Multi-Agent Get Expensive So Quickly?

Because every additional Agent usually adds another layer of:

- Inference cost

- Context assembly

- State passing

- Tool calls

A Very Intuitive Example

agents = [

{"name": "planner", "cost": 0.002, "latency_ms": 400},

{"name": "researcher", "cost": 0.003, "latency_ms": 700},

{"name": "writer", "cost": 0.004, "latency_ms": 900},

{"name": "reviewer", "cost": 0.002, "latency_ms": 500},

]

total_cost = sum(a["cost"] for a in agents)

total_latency = sum(a["latency_ms"] for a in agents)

print("total_cost =", total_cost)

print("total_latency_ms =", total_latency)

Expected output:

total_cost = 0.011

total_latency_ms = 2500

If these steps are still executed serially, the overall latency becomes even more noticeable.

A Very Important Engineering Judgment

Many times, the biggest problem in multi-Agent systems is not poor quality, but:

A 10% quality improvement, but a 3x increase in cost and latency.

So you must consciously ask:

- Is this step really worth keeping?

- Can two roles be merged?

- Can the reviewer be triggered only for high-risk tasks?

Common Challenge 5: The System Is Not Observable

Why Is This a Big Problem?

Once a multi-Agent system fails, if you can only see the final answer, you probably have no idea:

- Which Agent made the mistake

- Whether the problem was in communication, assignment, or tools

- Who first pushed the system off track

At Minimum, Record These Pieces of Information

- task_id

- agent_name

- action

- input summary

- output summary

- latency

A minimal trace example:

trace = [

{"task_id": "t1", "agent": "planner", "action": "decompose", "latency_ms": 120},

{"task_id": "t1", "agent": "retriever", "action": "search_docs", "latency_ms": 350},

{"task_id": "t1", "agent": "writer", "action": "draft", "latency_ms": 480}

]

for item in trace:

print(item)

Expected output:

{'task_id': 't1', 'agent': 'planner', 'action': 'decompose', 'latency_ms': 120}

{'task_id': 't1', 'agent': 'retriever', 'action': 'search_docs', 'latency_ms': 350}

{'task_id': 't1', 'agent': 'writer', 'action': 'draft', 'latency_ms': 480}

Without this kind of trace, debugging a multi-Agent system becomes extremely difficult.

Common Challenge 6: Role Boundary Drift

What Is Role Boundary Drift?

Originally:

- The planner is responsible for breaking down tasks

- The writer is responsible for writing answers

But gradually, the system becomes:

- The planner also starts retrieving

- The writer also starts judging task priority

In the end, every role becomes more and more like an “all-purpose Agent.”

Why Is This Dangerous?

Because it will make:

- Responsibilities blurry

- Debugging harder

- Responsibility boundaries disappear

So you should regularly check a multi-Agent system:

Has this Agent’s responsibility already gone out of bounds?

A More Practical “Challenge Checklist”

If you are building a multi-Agent system, this checklist is very useful:

| Problem | Common Symptoms |

|---|---|

| Repeated work | Multiple Agents do the same thing |

| Message distortion | Different understanding of the same task |

| Conflict does not converge | Multiple conclusions, nobody makes the final call |

| Costs too high | Too many roles, each step too long |

| State desynchronization | Someone keeps working based on old information |

| Hard to debug | You only see the final output, not the intermediate process |

Before adding more Agents, check whether repeated work, message drift, unresolved conflicts, cost growth, or missing trace data is the real source of instability.

The Solution Is Not “More Complex,” but “Clearer”

When many people run into problems, their first reaction is:

- Add another coordination Agent

- Add another judge Agent

- Add another summarization Agent

But the direction that makes a multi-Agent system truly more stable is often not to keep stacking roles, but to make things clearer:

- Clearer messages

- Clearer division of labor

- Clearer termination conditions

- Clearer observation methods

In other words:

Fixing multi-Agent systems is often not about “adding more complexity,” but about “drawing the boundaries clearly again.”

Summary

The most important thing in this section is not just listing the challenges, but understanding this:

The real difficulty in multi-Agent systems is not the capability of a single Agent, but whether the system as a whole can converge, be observed, and be controlled.

Once you start looking at multi-Agent systems through the four categories of “repetition, conflict, cost, and observability,” system tuning becomes much clearer.

Exercises

- Redesign the conflict resolution logic in this section with a “reviewer makes the final call” version.

- Think about it: if a multi-Agent system keeps retrieving the same information over and over, would you first change task assignment, the communication protocol, or shared state?

- Design your own multi-Agent trace structure, including at least

task_id,agent,action, andlatency_ms. - Explain in your own words: why, when a multi-Agent system has problems, is it often not because “the model is too weak,” but because “the system boundaries are unclear”?