9.2.1 Reasoning Roadmap: Plan, Act, Check

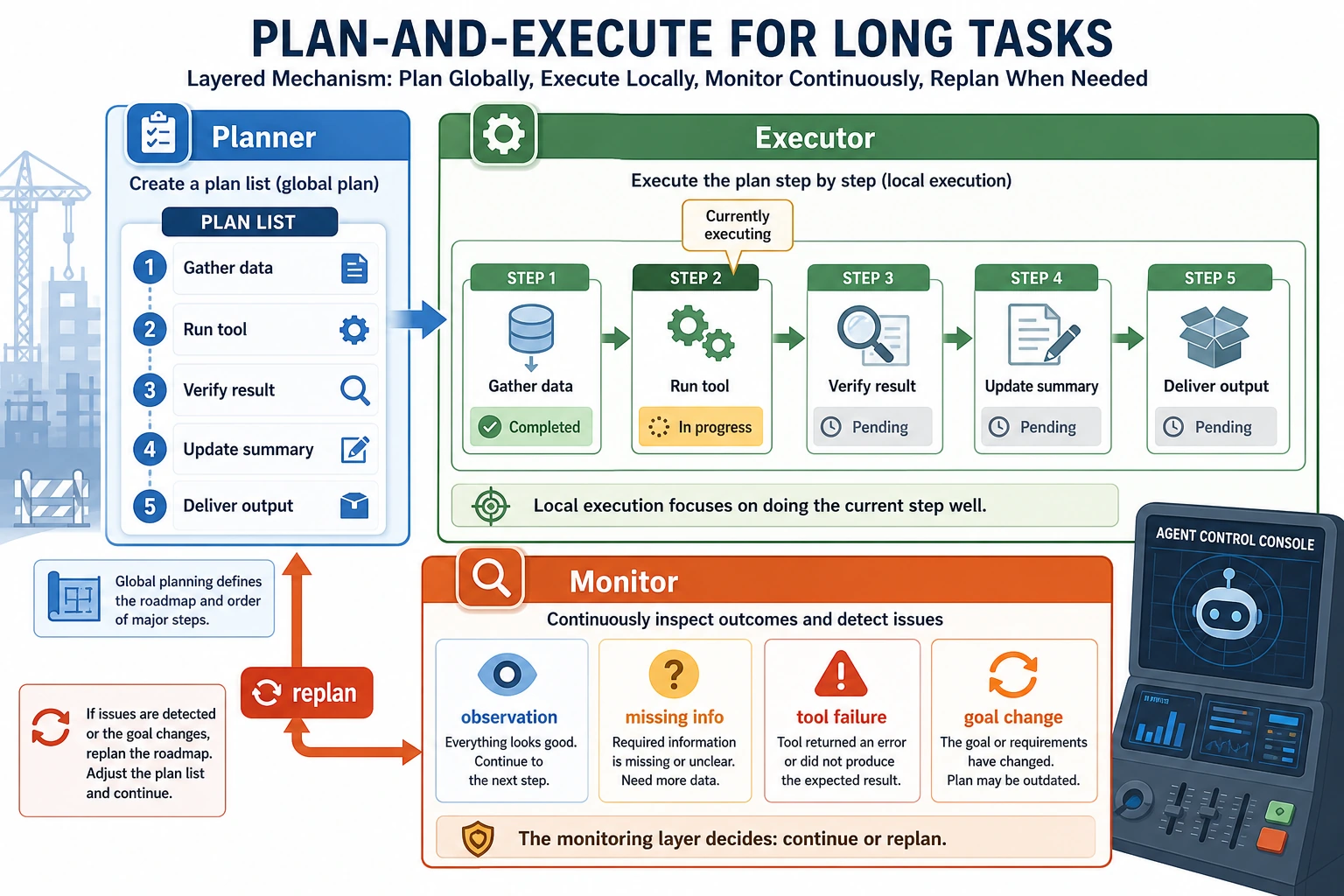

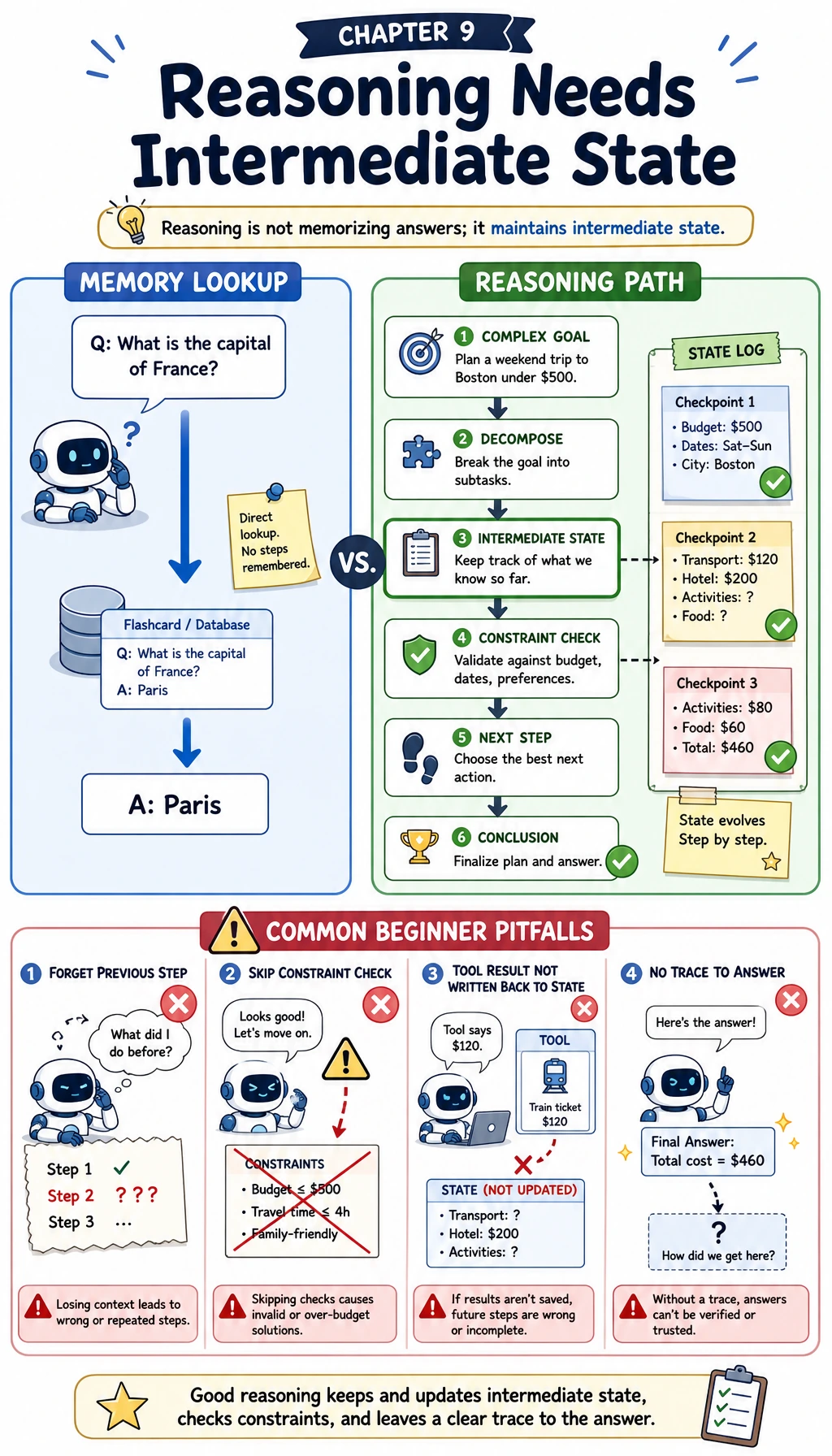

Agent reasoning is not a longer answer. It is the ability to create usable intermediate steps, decide what to do next, and check whether the plan is still working.

See the Planning Loop First

The core habit is: plan a step, act, observe the result, checkpoint state, and replan when the result changes the situation.
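The loop described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a reference implementation: `run_plan`, `fake_act`, the `{"ok": ..., "result": ...}` observation shape, and the "fix, then retry" replan rule are all hypothetical names and choices made for this example.

```python
def run_plan(plan, act, max_replans=2):
    """Plan, act, observe, checkpoint, and replan when a step fails."""
    checkpoints = []      # (step, result) pairs for steps that succeeded
    queue = list(plan)    # remaining steps, in order
    replans = 0
    while queue:
        step = queue.pop(0)
        observation = act(step)  # act, then observe the result
        if observation["ok"]:
            checkpoints.append((step, observation["result"]))  # checkpoint state
        elif replans < max_replans:
            replans += 1
            # Replan: run a recovery step, then retry the failed step.
            queue = [f"fix: {step}", step] + queue
        else:
            raise RuntimeError(f"gave up on step: {step}")
    return checkpoints


# Hypothetical action: retrieval fails until a fix step has run.
state = {"fixed": False}

def fake_act(step):
    if step.startswith("fix:"):
        state["fixed"] = True
        return {"ok": True, "result": "fixed"}
    if step == "retrieve sources" and not state["fixed"]:
        return {"ok": False, "result": "no sources found"}
    return {"ok": True, "result": "done"}


trace = run_plan(["inspect question", "retrieve sources", "draft answer"], fake_act)
for step, result in trace:
    print(step, "->", result)
```

Capping replans with `max_replans` is one simple way to keep a failing step from looping forever; a real agent would likely use a richer stopping rule.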

Run a Plan Checklist

Use explicit steps before adding tools. A plan you cannot print is hard to inspect.

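    # The task and its plan are plain data, so they can be printed and inspected.
    task = "prepare a cited RAG demo answer"
    plan = ["inspect question", "retrieve sources", "draft answer", "check citations"]

    print("task:", task)
    for index, step in enumerate(plan, start=1):
        print(f"{index}. {step}")

    # The last step doubles as the final checkpoint.
    print("checkpoint:", plan[-1])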

Expected output:

    task: prepare a cited RAG demo answer
    1. inspect question
    2. retrieve sources
    3. draft answer
    4. check citations
    checkpoint: check citations

Good planning is visible. It should make failures easier to locate, not hide them behind a final paragraph.

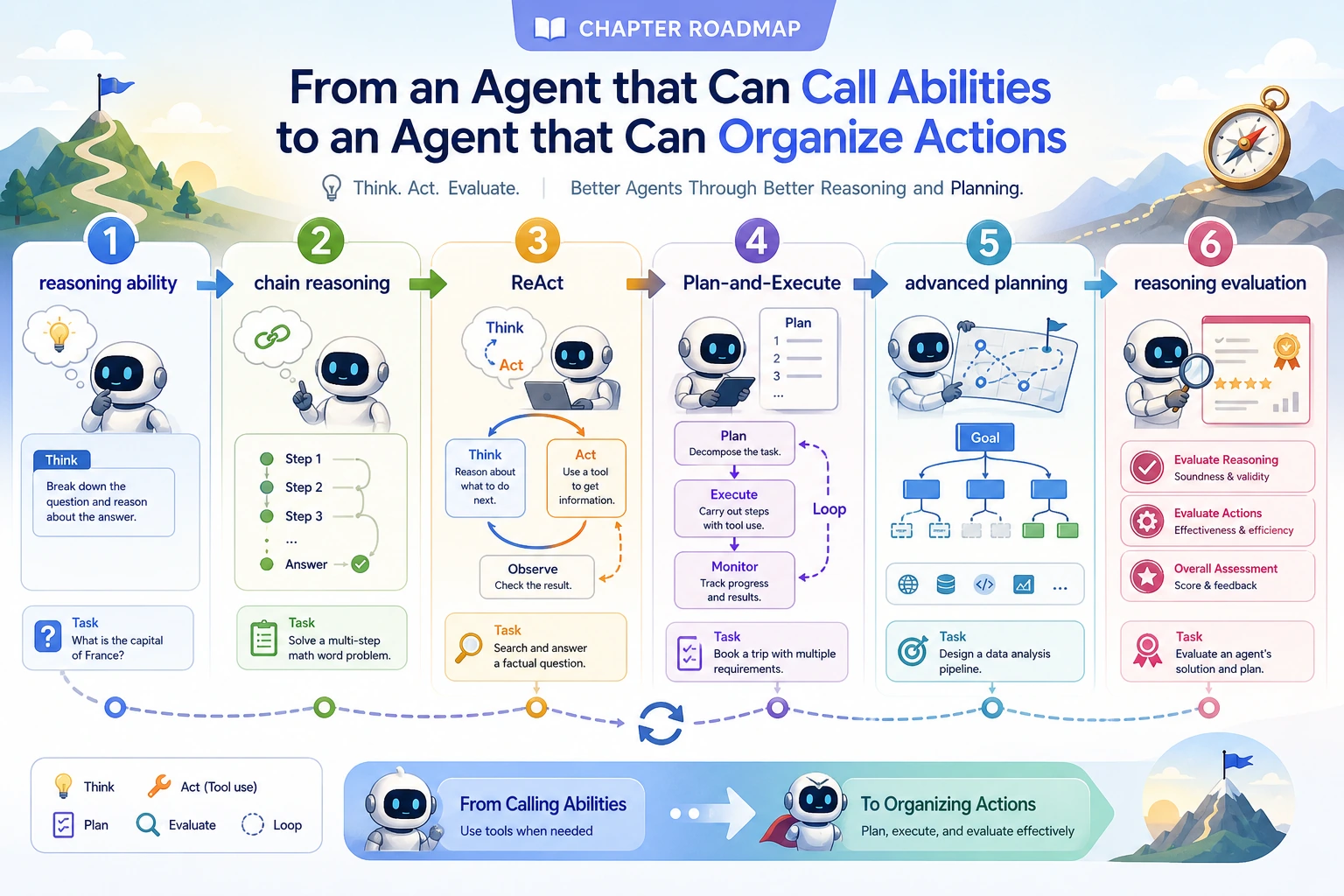

Learn in This Order

| Step | Read | Practice Output |
|---|---|---|
| 1 | LLM reasoning | Distinguish knowing an answer from deriving a path |
| 2 | Chain reasoning | Create intermediate states and self-check points |
| 3 | ReAct | Interleave thought, action, observation, and next step |
| 4 | Plan-and-Execute | Separate planning from execution when tasks grow |
| 5 | Advanced planning | Handle dependency, priority, rollback, and replan |
| 6 | Reasoning evaluation | Score final result, path quality, and failure type |
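As a small preview of the dependency handling in step 5, the sketch below orders plan steps by what each one depends on. It assumes Python 3.9 or later for the standard-library `graphlib` module; the `depends_on` mapping is a hypothetical example built from the checklist steps, not part of any agent framework.

```python
from graphlib import TopologicalSorter

# Hypothetical dependencies: each step maps to the steps it must wait for.
depends_on = {
    "retrieve sources": {"inspect question"},
    "draft answer": {"retrieve sources"},
    "check citations": {"draft answer"},
}

# static_order() yields every step only after all of its dependencies.
ordered_steps = list(TopologicalSorter(depends_on).static_order())

for index, step in enumerate(ordered_steps, start=1):
    print(f"{index}. {step}")
```

Because this example is a single chain, the order matches the original checklist. With branching dependencies, more than one valid order can exist.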

Pass Check

You pass this chapter when you can explain why a plan failed: bad decomposition, wrong tool choice, stale observation, missing checkpoint, or weak final verification.
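One way to practice this is to label each failed run with exactly one of the failure types above and count the labels. The sketch below is only an illustration: the `PlanFailure` enum, the `summarize` helper, and the run labels are made up for this example and do not come from real evaluation data.

```python
from collections import Counter
from enum import Enum


class PlanFailure(Enum):
    BAD_DECOMPOSITION = "bad decomposition"
    WRONG_TOOL = "wrong tool choice"
    STALE_OBSERVATION = "stale observation"
    MISSING_CHECKPOINT = "missing checkpoint"
    WEAK_VERIFICATION = "weak final verification"


# Illustrative, hand-written labels; not real results.
reviews = [
    ("run-1", PlanFailure.STALE_OBSERVATION),
    ("run-2", PlanFailure.MISSING_CHECKPOINT),
    ("run-3", PlanFailure.STALE_OBSERVATION),
]


def summarize(reviews):
    """Count failure types, most frequent first."""
    counts = Counter(failure for _, failure in reviews)
    return [(failure.value, n) for failure, n in counts.most_common()]


summary = summarize(reviews)
for label, n in summary:
    print(f"{label}: {n}")
```

Forcing a single label per failed run keeps the explanation specific: "the plan failed" becomes "the agent acted on a stale observation".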

The exit mini project is a visible reasoning trace for one task: plan steps, observations, replans, and the final answer.
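A possible starting point for the mini project is a small trace object that records each event in order and prints it. This is a sketch under assumptions: `ReasoningTrace`, its four event kinds, and the sample events are hypothetical names and content chosen for illustration.

```python
from dataclasses import dataclass, field

ALLOWED_KINDS = {"plan", "observation", "replan", "answer"}


@dataclass
class ReasoningTrace:
    task: str
    events: list = field(default_factory=list)  # (kind, text) pairs in order

    def add(self, kind, text):
        # Reject unknown kinds so the trace stays easy to scan.
        if kind not in ALLOWED_KINDS:
            raise ValueError(f"unknown event kind: {kind}")
        self.events.append((kind, text))

    def render(self):
        lines = [f"task: {self.task}"]
        for index, (kind, text) in enumerate(self.events, start=1):
            lines.append(f"{index}. [{kind}] {text}")
        return "\n".join(lines)


trace = ReasoningTrace("prepare a cited RAG demo answer")
trace.add("plan", "inspect question, retrieve sources, draft answer, check citations")
trace.add("observation", "retrieval returned no sources")
trace.add("replan", "rewrite the query, then retrieve again")
trace.add("answer", "draft with citations checked")
print(trace.render())
```

Keeping replans as their own event kind makes it easy to see where the original plan stopped working.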